This post is part of the lecture notes of my class “Introduction to Online Learning” at Boston University, Fall 2019. I will publish two lectures per week.

You can find the lectures I published till now here.

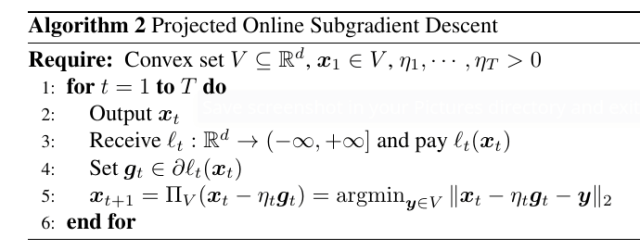

Last time, we introduced Projected Online Gradient Descent:

And we proved the following regret guarantee:

Theorem 1 Let

a closed non-empty convex set with diameter

, i.e.

. Let

an arbitrary sequence of convex functions

differentiable in open sets containing

for

. Pick any

assume

. Then,

, the following regret bound holds

Moreover, if

is constant, i.e.

, we have

However, the differentiability assumption for the is quite strong. What happens when the losses are convex but not differentiable? For example

. Note that this situation is more common than one would think. For example, the hinge loss,

, and the ReLU activation function used in neural networks,

, are not differentiable. It turns out that we can just use Online Gradient Descent, substituting the subgradients to the gradients. For this, we need some more convex analysis!

1. Convex Analysis Bits: Subgradients

First, we need a technical definition.

Definition 2 If a function

is nowhere

and finite somewhere, then

is called proper.

In these class, we are mainly interested in convex proper functions, that basically better conform to our intuition of what a convex function looks like.

Let’s first define formally what is a subgradient.

Definition 3 For a proper function

, we define a subgradient of

in

as a vector

that satisfies

Basically, a subgradient of in

is any vector

that allows us to construct a linear lower bound to

. Note that the subgradient is not unique, so we denote the set of subgradients of

in

by

, called subdifferential of

at

.

Observe that if is proper and convex, then

is empty for

, because the inequality cannot be satisfied when

. Also, the domain of

, denoted by

, is the set of all

such that

is nonempty; it is a subset of

. A proper convex function

is always subdifferentiable in

(Rockafellar, R. T., 1970, Theorem 23.4). If the function is convex, differentiable in

, and

is finite in

, we have that the subdifferential is composed by a unique element equal to

(Rockafellar, R. T., 1970, Theorem 25.1).

Also, we can also calculate the subgradient of sum of functions.

Theorem 4 (Rockafellar, R. T., 1970, Theorem 23.8,Bauschke, H. H. and Combettes, P. L., 2011, Corollary 16.39) Let

be proper convex functions on

, and

. Then

. If

, then actually

.

Example 1 Let

, then the subdifferential set

is

Example 2 Let’s calculate the subgradient of the indicator function for a non-empty convex set

. By definition,

if

This condition implies that

and

(because for

the inequality is always verified). The set of all

that satisfies the above inequality is called the normal cone of

at

. Note that the normal cone for any

(Hint: take

). For example, for

,

for all

.

Another useful theorem is to calculate the subdifferential of the pointwise maximum of convex functions.

Theorem 5 (Bauschke, H. H. and Combettes, P. L., 2011, Theorem 18.5) Let

be a finite set of convex functions from

to

and suppose

and

continuous at

. Set

and let

the set of the active functions. Then

Example 3 (Subgradients of the Hinge loss) Consider the loss

for

. The subdifferential set if

Definition 6 Let

is

-Lipschitz over a set

w.r.t a norm

if

.

We also have this handy result that upper bounds the norm of subgradients of convex Lipschitz functions.

Theorem 7 Let

proper and convex. Then,

is

-Lipschitz in

w.r.t. the L2 norm iff for all

and

we have

.

Proof: Assume

-Lipschitz, then

. For

small enough

, then

that implies that .

For the other implication, the definition of subgradient and Cauchy-Schwartz inequalities gives us

for any . Taking

, we also get

that completes the proof.

2. Analysis with Subgradients

As I promised you, with the proper mathematical tools, the analyzing online algorithms becomes easy. Indeed, switching from gradient to subgradient comes for free! In fact, our analysis of OGD with differentiable losses holds as is using subgradients instead of gradients. The reason is that the only property of the gradients that we used in our proof was that

where . However, the exact same property holds when

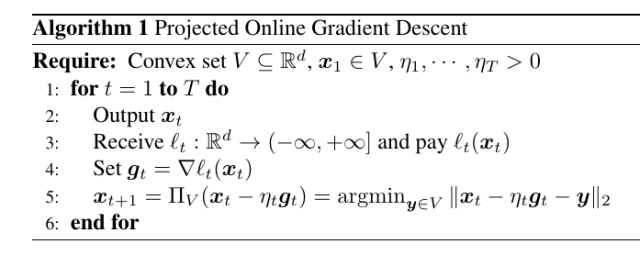

. So, we can state the Online Subgradient descent algorithm in the following way, where the only difference is line 4.

Also, the regret bounds we proved holds as well, just changing differentiability with subdifferentiability and gradients with subgradients.

3. From Convex Losses to Linear Losses

Let’s take a deeper look at this step

And summing over time, we have

Now, define the linear (and convex) losses , so we have

This is more powerful that what it seems: We upper bounded the regret with respect to the convex losses with a regret with respect to another sequence of linear losses. This is important because it implies that we can build online algorithms that deal only with linear losses, and through the reduction above they can be seamlessly used as OCO algorithms! Note that this not imply that this reduction is always optimal, it isn’t! But, it allows us to easily construct optimal OCO algorithms in many interesting cases.

So, we will often consider just the problem of minimizing the linear regret

This problem is called Online Linear Optimization (OLO).

Example 4 Consider the guessing game of the first class, we can solve easily it with Online Gradient Descent. Indeed, we just need to calculate the gradients, prove that they are bounded, and find a way to calculate the projection of a real number in

So,

, that is bounded for

. The projection on

is just

. With the optimal learning rate, the resulting regret would be

. This is worse than the one we found in the first class, showing that the reduction not always gives the best possible regret.

Example 5 Consider again the guessing game of the first class, but now change the loss function to the absolute loss of the difference:

. Now we will need to use Online Subgradient Descent, because the functions are non-differentiable. We can easily see that

Again, running Online Subgradient Descent with the optimal learning rate on this problem will give us immediately a regret of

, without having to think to a particular strategy for it.

4. Online-to-Batch Conversion

It is a good moment to take a break from online learning theory and see some application of online learning to other domains. For example, we may wonder what is the connection between online learning and stochastic optimization. Given that Projected Online (Sub)Gradient Descent looks basically the same as Projected Stochastic (Sub)Gradient Descent, they must have something in common. Indeed, we can show that, for example, we can reduce stochastic optimization of convex functions to OCO. Let’s see how.

Theorem 8 Let

where the expectation is w.r.t.

drawn from

over some vector space

and

is convex in the first argument. Draw

samples

i.i.d. from

and construct the sequence of losses

, where

are deterministic. Run any OCO algorithm over the losses

, to construct the sequence of predictions

. Then, we have

where the expectation is with respect to

.

We will prove it next time, let’s see now an application for it: Let’s now see how to use the above theorem to transform Online Subgradient Descent in Stochastic Subgradient Descent to minimize the training error of a classifier.

Example 6 Consider a problem of binary classification, with inputs

and outputs

. The loss function is the hinge loss:

. Suppose that you want to minimize the training error over a training set of

samples,

. Also, assume the maximum L2 norm of the samples is

. That is, we want to minimize

Run the reduction described in Theorem 8 for

iterations using OGD. In each iteration, construct

sampling a training point uniformly at random from

to

. Set

and

. We have that

In words, we used an OCO algorithm to stochastically optimize a function, transforming the regret guarantee into a convergence rate guarantee.

Next time we will prove the Online-to-Batch theorem and show many more examples on how to use it.

5. History Bits

The specific shape of Theorem 8 is new, but I wouldn’t be surprised if it appeared somewhere in the literature. In particular, the uniform averaging is from (N. Cesa-Bianchi and A. Conconi and Gentile, C. , 2004), but was proposed for the absolute loss in (Blum, A. and Kalai, A. and Langford, J., 1999). The non-uniform averaging, that we will use next time, is from (Zhang, T., 2004), even if there it is not proposed explicitly as a online-to-batch conversion.

6. Exercises

Exercise 1 Calculate the subdifferential set of the

-insensitive loss:

. It is a loss used in regression problems where we don’t want to penalize predictions

within

of the correct value

.

Exercise 2 Consider Projected Online Subgradient Descent for the example in the previous lecture about the failure of Follow-the-Leader: Can we use it on that problem? Would it guarantee sublinear regret? How the behaviour of the algorithm would differ from FTL?

Exercise 3 Implement the algorithm in 6 in any language you like: implementing an algorithm is the perfect way to see if you understood all the details of the algorithm.

Hi, in definition 6 for the Lipshitz functions I think there is a missing $L$ in the equation: {|f({\boldsymbol x})-f({\boldsymbol y})|\leq \|{\boldsymbol x}-{\boldsymbol y}\|

Also, in Example 6 it’s written “training set of N samples {\{({\boldsymbol x}_i,y_i)\}_{i=1}^N}”, but we said before that our inputs are {\boldsymbol z}_i \in R^d

I think I’m missing something in the second implication of Theorem 7, i.e. assuming {\|{\boldsymbol g}\|_2\leq L} then the function is $L$-Lipshitz. If we use the definition of subgradient we get

\displaystyle f({\boldsymbol x})-f({\boldsymbol y}) \leq \langle {\boldsymbol g}, \|{\boldsymbol x}-{\boldsymbol y}

why does it hold for the absolute value as well?

Thanks,

Nicolò

LikeLike

Thanks for finding the typos! I fixed them now.

For the proof of Theorem 7, I was indeed a bit sloppy. I changed the proof: you just do the same reasoning twice, swapping the role of x and y. Does it work now?

LikeLike

Right, thank you!

LikeLiked by 1 person

In example 6, shouldn’t the sqrt(T) be in the numerator and not the denominator?

Thanks!

LikeLike

I think it is correct: the regret is sqrt{T} and the online-to-batch conversion divides the regret by T, right?

LikeLike