This time we consider an application of Online Mirror Descent (OMD) to the problem of prediction with multi-scale expert advice.

In this setting, at each time step , an algorithm selects a probability distribution

over a set of

experts (or actions). After observing a loss vector

, the algorithm incurs a loss of

. The goal is to minimize the regret with respect to the best single expert in hindsight. However, unlike in the usual learning with expert advice setting, here the losses associated with different experts can have vastly different scales. For example, one expert might correspond to a low-risk, low-reward strategy with losses in

, while another might be a high-risk, high-reward strategy with losses in

. Thus, we would like to prove a regret guarantee that depends on the range of the best coordinate.

A standard and effective algorithm for this problem is the Exponentiated Gradient (EG) algorithm, which we saw previously as an instance of OMD. The EG algorithm has a regret bound that scales with the largest range of the loss coordinates, so it is not suitable for this problem. We need a different algorithm.

In this setting, we assume that we have prior knowledge of the approximate scale of losses for each expert. Specifically, for each expert , we know a value

such that the loss

is guaranteed to be in the range

for all

. The goal is to leverage this scale information to achieve better regret bounds.

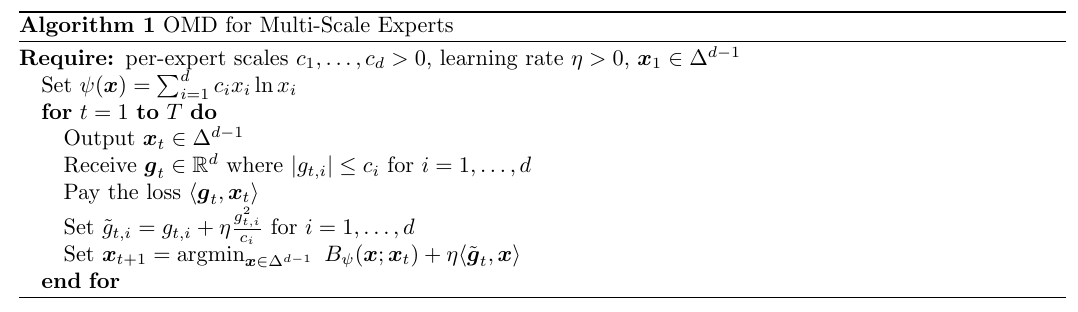

Here, we derive an algorithm for this setting as an instance of OMD with a specific choice of surrogate losses. In particular, we run OMD with distance generating function on the shifted losses

, see Algorithm 1.

Theorem 1. Let

such that

for all

and

. In Algorithm 1, set

. Then, we have

Using the local norm regret upper bound for OMD, we obtain

where is between

and

. Using (1), we have that

So, we can upper bound each with

.

Using again (1), we have that the right hand side of the regret upper bound becomes

Observe that , so we have

So, we obtain

Simplifying, we have the stated result.

Let’s now see how we can choose the prior and the initial point

. First, let’s calculate the value of the Bregman divergence:

Define ,

, and

to be any index in

. If we set

, we have

Now, set for

and

. Also, set

. In this way, for

, we have

On the other hand, for , we have

Hence, the previous bound also holds for all . In this case, choosing

, we have

Note that if then

, so we recover the bound of the EG algorithm, up to constant factors. Instead, if the scale of the losses is different, we still pay the scale of the best expert, instead of paying the biggest scale.

1. Tuning the Learning Rate using a Multi-scale Algorithm

We now show an interesting application of the multi-scale expert algorithm.

In our analysis of Online Subgradient Descent (OSD), we have seen that the choice of the learning rate is critical for performance. For instance, with a constant learning rate

, the optimal choice that minimizes the regret bound is

, which unfortunately depends on the competitor

and the entire sequence of future gradients. One might be tempted to use a grid of learning rates and select the best one in hindsight, but unfortunately this is not a valid online learning procedure.

In this section, we demonstrate how to use a multi-scale expert algorithm to design a meta-algorithm that automatically adapts to the best learning rate from a given set, paying only a small price in the regret. The core idea is to treat each instance of an online learning algorithm with a fixed learning rate as an “expert”. We then use a multi-scale expert algorithm to combine the predictions of these experts. The resulting ensemble algorithm will have a regret guarantee that is close to the regret of the best expert—and thus the best learning rate—in hindsight.

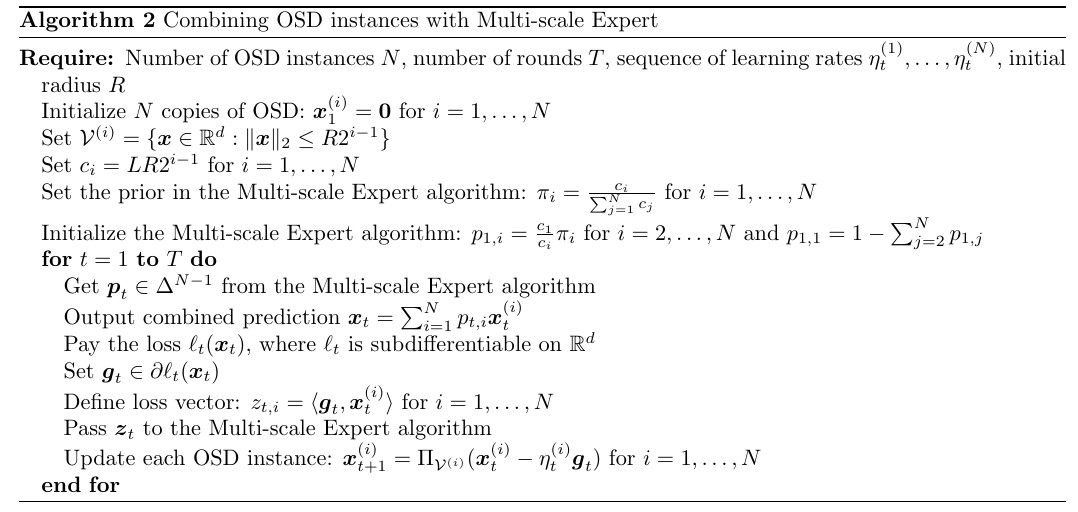

Let us consider running parallel instances of the OSD algorithm, each with a different learning rate

for

. At each round

, each OSD instance

produces a prediction

. We can view these

predictions as advice from

experts. Our goal is to combine them into a single prediction

that performs nearly as well as the best prediction

from the best OSD instance

.

A straightforward approach would be to compute the loss for each expert

and use this as the loss vector for the Multi-scale Expert algorithm. However, this would require computing

separate subgradients

at each round, which can be computationally expensive.

To create an efficient algorithm that requires only one subgradient evaluation per round, we can use the following linearization technique. The controller algorithm forms its combined prediction . We then receive a single subgradient

at this combined point. This single subgradient is then used to define a linear surrogate loss,

, which is passed to all expert algorithms and to the controller algorithm.

Because all experts receive the same linear loss function, they all use the same subgradient for their updates. The loss for the

-th expert, used by the multi-scale expert algorithm, is simply

. This procedure is summarized in Algorithm 2.

We can now prove a regret bound for this ensemble algorithm.

Theorem 2. Let

and fix

the number of rounds. With the notation in Algorithm 2, assume that the losses

are

-Lipschitz with respect to

. Let

be the set of learning rates for the OSD experts,

, and the learning rate of the Multi-scale Expert algorithm

. Then, Algorithm 2 satisfies

Proof: The choice of ensures that for any

such that

there exists an expert

such that

. Indeed, if

we take

, while otherwise we take

.

By the definition of subgradient, the regret of the ensemble algorithm can be bounded by the regret on the linearized losses:

We can now decompose the regret as

The second term is the regret of the best OSD expert on the sequence of linear losses . From the regret bound of OSD with constant learning rate

and initial point equal to zero, we have

For the first term, we have

This is the regret of the Multi-scale Expert algorithm against the expert on the sequence of loss vectors

where

for all

, where we used that

is

-Lipschitz and

has radius

. This is exactly the setting we used for the scales

. So, using the regret bound for the Multi-scale Expert algorithm, we get

Combining the bounds for both terms completes the proof.

This result shows that by combining OSD instances, we can achieve a regret that is close to the one obtained by the best learning rate in the chosen set. The constraint on is motivated by the fact that for larger competitors the regret would not be sublinear in

, even with prior knowledge of the norm of the competitor. Hence, it is impossible to compete with

that are too large. The additional multiplicative price for the adaptivity is

, that we know to be necessary by the lower bound for unconstrained online convex optimization.

This technique provides a principled method for automating the selection of learning rates in an online fashion. However, its interest is mostly theoretical: it shows that such adaptivity to is possible, but one should not expect strong empirical performance from this rather cumbersome procedure.

2. History Bits

The multi-scale setting was introduced independently (Dylan Foster, personal communication, 2026) and around the same time by Bubeck et al. (2017), Bubeck et al. (2019) and Foster et al. (2017). Bubeck et al. (2017), Bubeck et al. (2019) propose two algorithms, one for non-positive losses and one for generic losses. Unfortunately, their proof for the generic losses appears to be wrong and not easily fixable. Their bound is similar to the one we proved here. Foster et al. (2017) proved a stronger result, where the term in the logarithm depends on rather than on the sum of the scales and the minimal value. However, their algorithm has a computational complexity at step

of

. Cutkosky&Orabona (2018) proposed another solution that achieves the same bound of Foster et al. (2017), but with constant computational complexity.

The algorithm I describe here is a simplification of the one in Chen et al. (2021). The bound is worse than the one in Cutkosky&Orabona (2018) and Foster et al. (2017), but it is simpler to describe, and it also allowed me to describe the method of the shifted surrogate loss.

The use of shifted surrogate losses in learning with expert advice was proposed in Hazan and Kale (2008), Hazan&Kale (2010) and later used in Steinhardt&Liang (2014). Interestingly, these shifted surrogates can be understood as an approximation of the coin-betting instantaneous log wealth, as one can see by comparing the update of Squint (Koolen&van Erven, 2015) with the update of the KT-based parameter-free algorithm for learning with expert advice.

The idea of running multiple OSD algorithms and aggregating them with a Multi-scale Expert algorithm is from Foster et al. (2017), but they require function values per step, while we use only one subgradient per step using the linearization idea proposed in MetaGrad (van Erven&Koolen, 2016, van Erven et al., 2021). The idea of restricting the norm of the competitor to the “meaningful ones” is not necessary in the original formulation by Foster et al. (2017), but it is standard, and it has been rediscovered many times in different forms, e.g., in a method privately proposed by Nemirovski in 2013 and described in the ICML 2020 tutorial on “Parameter-free Online Optimization”, in Orabona (2014, Theorem 2), in Cutkosky (2019, Section 5).