While preparing the post on faster rates for saddle-point optimization with optimistic Online Convex Optimization algorithms, I realized I never explained Optimistic Online Mirror Descent (OMD). So, here it is: Optimistic OMD!

The post will be short: the algorithm is straightforward, especially after knowing Optimistic Follow-The-Regularized-Leader (FTRL), and the proof is the simplest one I know. Yet, there are a few interesting bits that one can extract from this short proof.

1. Optimistic OMD

I have already discussed the Optimistic version of FTRL and showed how the proof is immediate once we change the regularizers. By immediate, I mean that it is just the FTRL regret equality and a telescopic sum over the hints. Here, I’ll show that a regret bound for Optimistic OMD can be proven in exactly the same way.

I always found previous proofs of optimistic OMD unnecessarily complex. Instead, here we show that the proof is just the usual OMD proof applied to a different sequence of subgradients and a telescopic sum: easy peasy! I want to stress that this is not a “trick”, but the very essence of the Optimistic OMD algorithm.

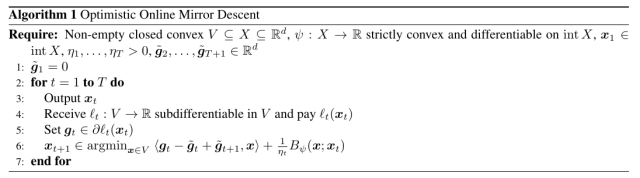

First, let’s introduce the Optimistic OMD algorithm, see Algorithm 1. Here, at round $t$ the algorithm receives a hint $\tilde{g}_{t+1}$ on the next subgradient $g_{t+1}$ and uses it to construct the update. At the same time, you have to remove the hint you used at the previous time step, $\tilde{g}_t$. In other words, the update is $x_{t+1}=\arg\min_{x\in V}\ \langle g_t-\tilde{g}_t+\tilde{g}_{t+1}, x\rangle+\frac{1}{\eta_t}B_\psi(x;x_t)$. (Note that this is the more recent one-update-per-step variant of Optimistic OMD, rather than the original two-updates-per-step Optimistic OMD, see the History Bits.)
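As a concrete instance, consider the update $x_{t+1}=\arg\min_{x\in V}\langle g_t-\tilde{g}_t+\tilde{g}_{t+1},x\rangle+\frac{1}{\eta_t}B_\psi(x;x_t)$ when $V$ is the probability simplex and $\psi$ is the negative entropy. The Bregman divergence is then the KL divergence, and the arg min has the closed form $x_{t+1,i}\propto x_{t,i}\exp(-\eta_t(g_{t,i}-\tilde{g}_{t,i}+\tilde{g}_{t+1,i}))$. The minimal sketch below implements this step. The entropic regularizer, the function name `optimistic_eg_step`, and the hint choice $\tilde{g}_{t+1}=g_t$ in the usage part are illustrative assumptions, not part of Algorithm 1.

```python
import math
from typing import List, Sequence


def optimistic_eg_step(x: Sequence[float], g: Sequence[float], hint_old: Sequence[float],
                       hint_new: Sequence[float], eta: float) -> List[float]:
    """One Optimistic OMD step on the simplex with the negative-entropy regularizer.

    Plain OMD would use g; here we use g - hint_old + hint_new instead,
    i.e., g_t - tilde g_t + tilde g_{t+1}.
    """
    # Modified "subgradient": remove the previous hint, add the new one.
    d = [gi - ho + hn for gi, ho, hn in zip(g, hint_old, hint_new)]
    # Multiplicative update in log space; subtract the max logit for numerical stability.
    logits = [math.log(xi) - eta * di for xi, di in zip(x, d)]
    m = max(logits)
    w = [math.exp(l - m) for l in logits]
    z = sum(w)
    return [wi / z for wi in w]


# Usage with a hypothetical hint tilde g_{t+1} = g_t, starting from tilde g_1 = 0.
x = [1 / 3, 1 / 3, 1 / 3]
hint = [0.0, 0.0, 0.0]
for g in ([1.0, 0.0, 0.5], [0.9, 0.1, 0.5]):
    next_hint = list(g)
    x = optimistic_eg_step(x, g, hint, next_hint, eta=0.5)
    hint = next_hint
```

If the new hint equals the old one and $g_t=\mathbf{0}$, the iterate does not move. Otherwise, coordinates whose combined direction $g_t-\tilde{g}_t+\tilde{g}_{t+1}$ is larger lose mass.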

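To gain some intuition on why this update makes sense, consider the case that $V=\mathbb{R}^d$, $\psi(x)=\frac{1}{2}\|x\|_2^2$, and $\eta_t=\eta$. In this case, $x_{t+1}=x_t-\eta(g_t-\tilde{g}_t+\tilde{g}_{t+1})$. Unrolling the update (with $\tilde{g}_1=\mathbf{0}$), we get $x_{t+1}=x_1-\eta\sum_{i=1}^t g_i-\eta\tilde{g}_{t+1}$. Without hints, that is in plain OMD, under the same assumptions the unrolled update would be $x_{t+1}=x_1-\eta\sum_{i=1}^t g_i$ and $x_{t+2}=x_1-\eta\sum_{i=1}^{t+1} g_i$. Hence, $\tilde{g}_{t+1}$ acts as a proxy for the next (unknown) subgradient $g_{t+1}$.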
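A minimal Python sketch can check the unrolling numerically. It assumes the Euclidean setting above ($V=\mathbb{R}^d$, $\psi(x)=\frac{1}{2}\|x\|_2^2$, constant $\eta$) and $\tilde{g}_1=\mathbf{0}$. It runs the recursive update and compares each iterate with the closed form $x_1-\eta\sum_{i=1}^t g_i-\eta\tilde{g}_{t+1}$. The function names are illustrative only.

```python
import random
from typing import List

Vec = List[float]


def euclidean_optimistic_omd(gs: List[Vec], hints: List[Vec], x1: Vec, eta: float) -> List[Vec]:
    """Optimistic OMD with V = R^d, psi(x) = ||x||_2^2 / 2, and constant eta.

    gs[t-1] is g_t for t = 1..T; hints[t-1] is tilde g_t for t = 1..T+1.
    Returns the iterates [x_1, ..., x_{T+1}].
    """
    xs = [list(x1)]
    for t in range(1, len(gs) + 1):
        g, h_old, h_new = gs[t - 1], hints[t - 1], hints[t]
        # x_{t+1} = x_t - eta * (g_t - tilde g_t + tilde g_{t+1})
        xs.append([x - eta * (gi - ho + hn) for x, gi, ho, hn in zip(xs[-1], g, h_old, h_new)])
    return xs


def unrolled(gs: List[Vec], hints: List[Vec], x1: Vec, eta: float, t: int) -> Vec:
    """Closed form x_1 - eta * sum_{i<=t} g_i - eta * tilde g_{t+1}, valid when tilde g_1 = 0."""
    return [x1[j] - eta * sum(g[j] for g in gs[:t]) - eta * hints[t][j] for j in range(len(x1))]


random.seed(0)
T, d, eta = 5, 3, 0.1
gs = [[random.gauss(0, 1) for _ in range(d)] for _ in range(T)]
# tilde g_1 = 0, followed by arbitrary hints tilde g_2, ..., tilde g_{T+1}
hints = [[0.0] * d] + [[random.gauss(0, 1) for _ in range(d)] for _ in range(T)]
x1 = [0.0] * d
xs = euclidean_optimistic_omd(gs, hints, x1, eta)
for t in range(1, T + 1):
    assert all(abs(a - b) < 1e-12 for a, b in zip(xs[t], unrolled(gs, hints, x1, eta, t)))
```

Setting every hint to zero recovers plain online gradient descent. With the (unknowable) perfect hint $\tilde{g}_{t+1}=g_{t+1}$, the iterate $x_{t+1}$ would coincide with the plain-OMD iterate $x_{t+2}$.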

Note that one might be tempted to multiply $\tilde{g}_t$ by $\frac{\eta_{t-1}}{\eta_t}$, because in the previous iteration we used the learning rate $\eta_{t-1}$. However, the online learning proof reveals that the correct way to see the update is to think of the learning rate as attached to the Bregman divergence rather than to the subgradients. (Things might be different in the batch and stochastic settings, where the best proofs deal with the learning rates in a slightly different way.)

One might also be tempted to find a way to study this algorithm with a special proof. However, the one-step lemma we proved for OMD is essentially tight: we only used two inequalities, one to deal with the set and the other one to linearize the losses. Both steps can be made tight by considering $V=\mathbb{R}^d$ and linear losses. Hence, if the update is just OMD with a different sequence of subgradients, the proof must follow from the one of OMD applied to that sequence. This is a general rule: if we have a theorem based on a tight inequality, any other proof of the same theorem, no matter how complex, must be looser or, in the best case, equivalent. On the other hand, some (fake) complexity might help you with some reviewers, but this is another story… 😉
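The tightness claim can be checked in its simplest case: $V=\mathbb{R}^d$, $\psi(x)=\frac{1}{2}\|x\|_2^2$, and a linear loss. For a linear loss, the linearization step $\ell_t(x_t)-\ell_t(u)\le\langle g_t,x_t-u\rangle$ is trivially an equality. The sketch below checks the other step on random vectors. For an unconstrained Euclidean step $x_{t+1}=x_t-\eta\hat{g}$, the one-step inequality $\eta\langle\hat{g},x_t-u\rangle\le B_\psi(u;x_t)-B_\psi(u;x_{t+1})-B_\psi(x_{t+1};x_t)+\eta\langle\hat{g},x_t-x_{t+1}\rangle$ holds with equality for any vector $\hat{g}$, including $g_t-\tilde{g}_t+\tilde{g}_{t+1}$. The helper names are hypothetical.

```python
import random
from typing import List

Vec = List[float]


def dot(a: Vec, b: Vec) -> float:
    return sum(x * y for x, y in zip(a, b))


def breg(a: Vec, b: Vec) -> float:
    """Bregman divergence of psi(x) = ||x||_2^2 / 2, which is ||a - b||_2^2 / 2."""
    return 0.5 * sum((x - y) ** 2 for x, y in zip(a, b))


def one_step_gap(x_t: Vec, g_hat: Vec, u: Vec, eta: float) -> float:
    """(r.h.s. - l.h.s.) of the one-step OMD inequality for an unconstrained Euclidean step."""
    x_next = [x - eta * g for x, g in zip(x_t, g_hat)]  # the argmin over V = R^d
    lhs = eta * dot(g_hat, [a - b for a, b in zip(x_t, u)])
    rhs = (breg(u, x_t) - breg(u, x_next) - breg(x_next, x_t)
           + eta * dot(g_hat, [a - b for a, b in zip(x_t, x_next)]))
    return rhs - lhs


random.seed(1)
d = 4
gaps = []
for _ in range(100):
    x_t = [random.uniform(-5, 5) for _ in range(d)]
    g_hat = [random.uniform(-5, 5) for _ in range(d)]  # any vector, e.g. g_t - tilde g_t + tilde g_{t+1}
    u = [random.uniform(-5, 5) for _ in range(d)]
    gaps.append(one_step_gap(x_t, g_hat, u, eta=random.uniform(0.01, 2.0)))
print(max(abs(gap) for gap in gaps))  # only floating-point round-off remains
```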

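Theorem 1. Let $B_\psi$ be the Bregman divergence w.r.t. $\psi: X \to \mathbb{R}$ and assume $\psi$ to be proper, closed, and $\lambda$-strongly convex with respect to $\|\cdot\|$ in $V$. Let $V \subseteq X$ be a non-empty closed convex set. With the notation in Algorithm 1, assume $x_{t+1}$ exists, and it is in $\operatorname{int} X$, for $t=1,\dots,T$.

Assume $\eta_{t+1} \le \eta_t$, $t=1,\dots,T$. Then, for all $u \in V$ and setting $\tilde{g}_{T+1}=\mathbf{0}$, the following regret bounds hold

$$
\begin{aligned}
\sum_{t=1}^T \left(\ell_t(x_t)-\ell_t(u)\right)
&\le \max_{1\le t\le T} \frac{B_\psi(u;x_t)}{\eta_T} + \sum_{t=1}^T \left(\langle g_t-\tilde{g}_t, x_t-x_{t+1}\rangle - \frac{1}{\eta_t} B_\psi(x_{t+1};x_t)\right)\\
&\le \max_{1\le t\le T} \frac{B_\psi(u;x_t)}{\eta_T} + \frac{1}{2\lambda}\sum_{t=1}^T \eta_t \|g_t-\tilde{g}_t\|_\star^2 .
\end{aligned}
$$

Moreover, if $\eta_t$ is constant, i.e., $\eta_t=\eta$ for $t=1,\dots,T$, we have

$$
\begin{aligned}
\sum_{t=1}^T \left(\ell_t(x_t)-\ell_t(u)\right)
&\le \frac{B_\psi(u;x_1)}{\eta} + \sum_{t=1}^T \left(\langle g_t-\tilde{g}_t, x_t-x_{t+1}\rangle - \frac{1}{\eta} B_\psi(x_{t+1};x_t)\right)\\
&\le \frac{B_\psi(u;x_1)}{\eta} + \frac{\eta}{2\lambda}\sum_{t=1}^T \|g_t-\tilde{g}_t\|_\star^2 .
\end{aligned}
$$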

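Proof: The proof closely follows the one of Lemma 4 for OMD, with $g_t-\tilde{g}_t+\tilde{g}_{t+1}$ in place of $g_t$, so we only show the different steps. First of all, changing the sequence of subgradients, we immediately have, for $t=1,\dots,T$,

$$
\langle g_t-\tilde{g}_t+\tilde{g}_{t+1}, x_t-u\rangle \le \frac{B_\psi(u;x_t)-B_\psi(u;x_{t+1})}{\eta_t} + \langle g_t-\tilde{g}_t+\tilde{g}_{t+1}, x_t-x_{t+1}\rangle - \frac{1}{\eta_t}B_\psi(x_{t+1};x_t).
$$

Summing the l.h.s. over $t$, we obtain

$$
\sum_{t=1}^T \langle g_t-\tilde{g}_t+\tilde{g}_{t+1}, x_t-u\rangle = \sum_{t=1}^T \langle g_t, x_t-u\rangle + \sum_{t=1}^T \langle \tilde{g}_{t+1}-\tilde{g}_t, x_t-u\rangle .
$$

Summing the last terms on the r.h.s., we have that

$$
\sum_{t=1}^T \langle g_t-\tilde{g}_t+\tilde{g}_{t+1}, x_t-x_{t+1}\rangle = \sum_{t=1}^T \langle g_t-\tilde{g}_t, x_t-x_{t+1}\rangle + \sum_{t=1}^T \langle \tilde{g}_{t+1}, x_t-x_{t+1}\rangle .
$$

Finally, observe that

$$
\sum_{t=1}^T \langle \tilde{g}_{t+1}, x_t-x_{t+1}\rangle - \sum_{t=1}^T \langle \tilde{g}_{t+1}-\tilde{g}_t, x_t-u\rangle
= \sum_{t=1}^T \left(\langle \tilde{g}_t, x_t-u\rangle - \langle \tilde{g}_{t+1}, x_{t+1}-u\rangle\right)
= \langle \tilde{g}_1, x_1-u\rangle - \langle \tilde{g}_{T+1}, x_{T+1}-u\rangle .
$$

Taking $\tilde{g}_1=\mathbf{0}$, the first term vanishes. Given that the losses on rounds $1,\dots,T$ do not depend on $\tilde{g}_{T+1}$, we can safely set it to $\mathbf{0}$, so the second term vanishes as well. Putting all together, using $\ell_t(x_t)-\ell_t(u)\le\langle g_t, x_t-u\rangle$ by convexity, and telescoping the Bregman terms exactly as in the proof of OMD, we have the stated bounds.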
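The last identity is pure bookkeeping. It holds for arbitrary points $x_1,\dots,x_{T+1}$, any $u$, and any hints, not only for the iterates of Algorithm 1, which is why the hints cost nothing beyond a telescopic sum. The Python sketch below, with hypothetical helper names, checks the identity on random vectors.

```python
import random
from typing import List

Vec = List[float]


def dot(a: Vec, b: Vec) -> float:
    return sum(x * y for x, y in zip(a, b))


def sub(a: Vec, b: Vec) -> Vec:
    return [x - y for x, y in zip(a, b)]


def hint_terms(xs: List[Vec], hints: List[Vec], u: Vec) -> float:
    """sum_t <h_{t+1}, x_t - x_{t+1}> - <h_{t+1} - h_t, x_t - u>, for t = 1..T.

    xs[t-1] is x_t and hints[t-1] is tilde g_t, both for t = 1..T+1.
    """
    T = len(xs) - 1
    total = 0.0
    for t in range(1, T + 1):
        x_t, x_next = xs[t - 1], xs[t]
        h_t, h_next = hints[t - 1], hints[t]
        total += dot(h_next, sub(x_t, x_next)) - dot(sub(h_next, h_t), sub(x_t, u))
    return total


random.seed(2)
T, d = 10, 3
xs = [[random.gauss(0, 1) for _ in range(d)] for _ in range(T + 1)]
hints = [[random.gauss(0, 1) for _ in range(d)] for _ in range(T + 1)]
u = [random.gauss(0, 1) for _ in range(d)]
# Boundary terms: <tilde g_1, x_1 - u> - <tilde g_{T+1}, x_{T+1} - u>
boundary = dot(hints[0], sub(xs[0], u)) - dot(hints[T], sub(xs[T], u))
assert abs(hint_terms(xs, hints, u) - boundary) < 1e-9
```

Zeroing the first and the last hint makes the whole sum vanish, which is exactly the step used in the proof.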

To obtain the second inequalities, we use the strong convexity of $\psi$ as in the proof of OMD.
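Spelled out, this is the usual OMD step applied term by term with $g_t-\tilde{g}_t$ in place of $g_t$. The first inequality uses the definition of the dual norm and $B_\psi(x_{t+1};x_t)\ge\frac{\lambda}{2}\|x_{t+1}-x_t\|^2$, which follows from the $\lambda$-strong convexity of $\psi$ in $V$. The second maximizes a concave quadratic in $z=\|x_t-x_{t+1}\|$:

$$
\begin{aligned}
\langle g_t-\tilde{g}_t, x_t-x_{t+1}\rangle - \frac{1}{\eta_t}B_\psi(x_{t+1};x_t)
&\le \|g_t-\tilde{g}_t\|_\star \|x_t-x_{t+1}\| - \frac{\lambda}{2\eta_t}\|x_t-x_{t+1}\|^2\\
&\le \max_{z\ge 0}\left(\|g_t-\tilde{g}_t\|_\star\, z - \frac{\lambda}{2\eta_t} z^2\right)
= \frac{\eta_t}{2\lambda}\|g_t-\tilde{g}_t\|_\star^2 .
\end{aligned}
$$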

These regret bounds are essentially the same as those of Optimistic FTRL, minus the intrinsic differences between FTRL and OMD. In particular, the constant factors are also the same. Hence, results similar to the ones we proved for Optimistic FTRL can be proved for Optimistic OMD.
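The constant-learning-rate bound of Theorem 1 can be sanity-checked numerically. The sketch below assumes $V=\mathbb{R}^d$, $\psi(x)=\frac{1}{2}\|x\|_2^2$ (so $\lambda=1$ w.r.t. $\|\cdot\|_2$, which is its own dual), linear losses $\ell_t(x)=\langle g_t,x\rangle$, $x_1=\mathbf{0}$, and $\tilde{g}_1=\mathbf{0}$. It also assumes the hint $\tilde{g}_{t+1}=g_t$, which is a common but hypothetical choice here, as is the helper `run`. On slowly drifting subgradients, $\sum_t\|g_t-\tilde{g}_t\|_2^2$ stays small, and the regret should never exceed $\frac{\|u-x_1\|_2^2}{2\eta}+\frac{\eta}{2}\sum_t\|g_t-\tilde{g}_t\|_2^2$.

```python
import math
import random
from typing import List, Tuple

Vec = List[float]


def dot(a: Vec, b: Vec) -> float:
    return sum(x * y for x, y in zip(a, b))


def run(gs: List[Vec], eta: float, u: Vec) -> Tuple[float, float]:
    """Euclidean Optimistic OMD on linear losses <g_t, x> with hint tilde g_{t+1} = g_t.

    Returns (regret against u, constant-eta bound of Theorem 1 with lambda = 1).
    """
    d = len(u)
    x = [0.0] * d        # x_1 = 0
    hint = [0.0] * d     # tilde g_1 = 0
    regret, path = 0.0, 0.0
    for g in gs:
        regret += dot(g, x) - dot(g, u)                          # loss of x_t minus loss of u
        path += sum((gi - hi) ** 2 for gi, hi in zip(g, hint))   # ||g_t - tilde g_t||_2^2
        new_hint = g                                             # hypothetical hint for round t+1
        x = [xi - eta * (gi - hi + ni) for xi, gi, hi, ni in zip(x, g, hint, new_hint)]
        hint = new_hint
    bound = dot(u, u) / (2 * eta) + eta / 2 * path              # B(u; x_1) / eta + eta/2 * sum
    return regret, bound


random.seed(3)
T = 1000
gs = [[math.cos(0.01 * t) + 0.01 * random.gauss(0, 1),
       math.sin(0.01 * t) + 0.01 * random.gauss(0, 1)] for t in range(T)]
u = [-0.6, -0.8]
regret, bound = run(gs, eta=0.5, u=u)
print(regret, bound)
```

Because the hints track the subgradients closely, the sum of the squared prediction errors $\|g_t-\tilde{g}_t\|_2^2$ is much smaller here than $\sum_t\|g_t\|_2^2$, which is the quantity plain OMD would pay.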

As said above, we will use this result to show how to accelerate the optimization of smooth saddle-point problems.

2. History Bits

The original Optimistic OMD was proposed by Rakhlin and Sridharan (2013) with two updates per step. Later, Joulani et al. (2017) showed that the same bounds could be obtained with a simpler one-update-per-step version of Optimistic OMD, which is the version I describe here. The proof I present here is based on the one I proposed for Optimistic FTRL.