In this post, we will see some connections between saddle-point problems and Game Theory, as well as with Boosting.

Warning: this post heavily needs the knowledge of the min-max problems. So, I strongly suggest to read the previous one before, if you do not remember it.

1. Game-Theory interpretation of Saddle-Point Problems

An instantiation of a saddle-point problems also has an interpretation in Game Theory as a Two-player Zero-Sum Game. Note that Game Theory is a vast field and two-person zero-sum games are only a very small subset of the problems in this domain and what I describe here is an even smaller subset of this subset of problems.

Game theory studies what happens when self-interested agents interact. By self-interested, we mean that each agent has an ideal state of things he wants to reach, that can include a description of what should happen to other agents as well, and he works towards this goal. In two-person games the players act simultaneously and then they receive their losses. In particular, the -player chooses the play

and the

-player chooses the play

, the

-player suffers the loss

and the

-player the loss

. It is important to understand that this is only one round, that is it has only one play for each player. Note that the standard game-theoretic terminology uses payoffs instead of losses, but we will keep using losses for coherence with the online convex optimization notation that I use on this blog.

We consider the so-called two-person normal-form games, that is when the first player has possible actions and the second player

. A player can use a pure strategy, that is a single fixed action, or randomize over a set of actions according to some probability distribution, a so-called mixed strategy. In this case, we consider

and

and they are known as the action spaces for the two players. In this setting, for a pair of pure strategies

, the first player receives the loss

and the second player

, where

is the vector with all zeros but a ‘1’ in position

. The goal of each player is to minimize the received loss. Given the discrete nature of this game, the function

is the bilinear function

, where

is a matrix with

rows and

columns. Hence, for a pair of mixed strategy

, the expected loss of the first player is

and the one of the second player is

.

A fundamental concept in game theory is the one of Nash Equilibrium. We have a Nash equilibrium if all players are playing their best strategy to the other players’ strategies. That is, none of the players has incentive to change their strategy if the other player does not change it. For the zero-sum two-person game, this can be formalized saying that is a Nash equilibrium if

This is exactly the definition of saddle-point for the function that we gave last time. Given that

is continuous, convex in the first argument and concave in the second one, the sets

and

are convex and compacts, we can deduce from Theorem 5 and Theorem 3 from my previous post that a saddle-point always exists. Hence, there is always at least one (possibly mixed) Nash equilibrium in two-person zero-sum games. The common value of the minimax and maxmin problem is called value of the game and we will denote it by

.

For zero-sum two-person game the Nash equilibrium has an immediate interpretation: From the definiton above, if the first player uses the strategy then his loss is less then the value of the game

, regardless of the strategy of the second player. Analogously, if the second player uses the strategy

then his loss is less then

, regardless of the strategy of the first player. Both players achieve the value of the game if they both play the Nash strategy. Moreover, even if one of the player would announce his strategy in advance to the other player, he would not increase his loss in expectation.

Example 1 (Cake cutting). Suppose to have a game between two people: The first player cuts the cake in two and the second one chooses a piece; the first player receives the piece that was not chosen. We can formalize it with the following matrix

larger piece smaller piece cut evenly 0 0 cut unevenly 10 -10 When the first player plays action

and the second player action

, the first player receives the loss

and the second player receives

. The losses represent how much less in percentage compared to half of the cake the first player is receiving. The second player receives the negative of the same number. It should be easy to convince oneself that the strategy pair is

is an equilibrium with value of the game of 0.

Example 2 (Rock-paper-scissors). Let’s consider the game of Rock-paper-scissors. We describe it with the following matrix

Rock Paper Scissors Rock 0 1 -1 Paper -1 0 1 Scissors 1 -1 0 It should be immediate to realize that there are no pure Nash equilibria for this game. However, there is a mixed Nash equilibrium when each player randomize the action with a uniform probability distribution and value of the game equal to 0.

Example 3 (Matching Pennies). In this game, both players show a face of a penny. If the two faces are the same, the first player wins both, otherwise the second player wins both. The associated matrix

is

head tail head -1 1 tail 1 -1 It is easy to see that the Nash equilibrium is when both players randomize the face to show with equal probability.

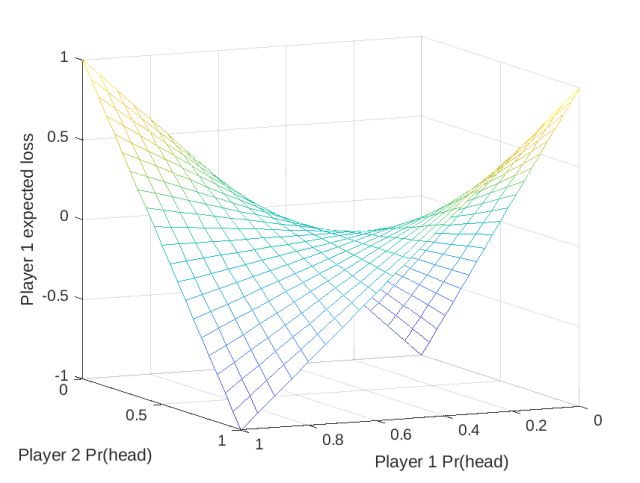

In this simple case, we can visualize the saddle point associated to this problem in Figure 1

Unless the game is very small, we find Nash equilibria using numerical procedures that typically give us only approximate solutions. Hence, as for -saddle points, we also define an

-Nash equilibrium for a zero-sum two-person game when

and

satisfy

Obviously, any Nash equilibrium is also an -Nash equilibrium.

From what we said last time, it should be immediate to see how to numerically calculate the Nash equilibrium of a two-person zero-sum game. In fact, we know that we can use online convex optimization algorithms to find -saddle points, so we can do the same for

-Nash equilibrium of two-person zero-sum games. Assuming that the average regret of both players is

, the theorem we saw last time says that

is a

– Nash equilibrium.

2. Boosting as a Two-Person Game

We will now show that the Boosting problem can also be seen as the solution of a zero-sum two-person game. In fact, this is my favorite explanation of Boosting because it focuses on the problem rather than on a specific algorithm.

Let a training set of

couples features/labels, where

and

. Let

a set of

functions

.

The aim of boosting is to find a combination of the functions in that has arbitrarily low misclassification error on

. Of course, this is not always possible. However, we will make a (strong) assumption: It is always possible to find a function

such that its misclassification error over

weighted with any probability distribution is better than chance by a constant

. We now show that this assumption guarantees that the boosting problem is solvable!

First, we construct a matrix of the misclassifications for each function: where

. Setting

and

, we write the saddle-point problem/two-person zero-sum game

Given the definition of the matrix , this is equivalent to

Let’s now formalize the assumption on the functions: We assume the existence of a weak learning oracle that for any , the oracle returns

such that

where . In words,

is the index of the function in

that gives a

-weighted error better than chance. Moreover, given that

and

we have that the value of the game satisfies . Using the inequality on the value of the game and the fact that the Nash equilibrium exists, we obtain that there exists

such that for any

In words, this means that every sample is misclassified by less than half of the functions

when weighted by

. Hence, we can correctly classify all the samples using a weighted majority vote rule where the weights over the function

are

. This means that we can learn a perfect classifier rule using weak learners, through the solution of a minimax game. So, our job is to find a way to calculate this optimal distribution

on the functions.

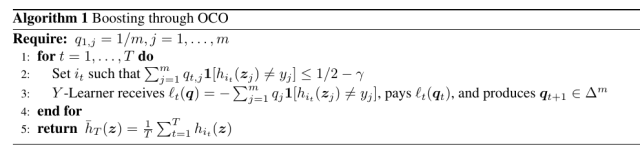

Given what we have said till now, a natural strategy is to use online convex optimization algorithms. In particular, we can use Algorithm 3 from my previous post, where in each round the -player is the weak learning oracle, that knows the play

by the

-Learner, while the

-player is an Online Convex Optimization (OCO) algorithm. Specialized to our setting we have the following algorithm. In words, the

-player looks for the function that has small enough weighted misclassification loss, where the weights are constructed by the

-player.

Let’s show a guarantee on the misclassification error of this algorithm. From the second equality of Theorem 6 in my previous post, for any , we have

From the assumption on the weak-learnability oracle, we have . Moreover, choosing

we have

. Putting all together, for any

, we have

If , less than half of the functions selected by the boosting procedure will make a mistake on

. Given that the predictor is a majority rule, this means that the majority rule will make 0 mistakes on the training samples.

In this scheme, we construct by approximating it with the frequency with which

is equal to

.

Let’s now instantiate this framework with a specific OCO algorithm. For example, using Exponentiated Gradient (EG) as algorithm for the -player, we have that

for any

, that implies that after

rounds the training error is exactly 0. This is exactly the same guarantee achieved by AdaBoost (Freund and Schapire, 1997).

Boosting and Margins What happens if we keep boosting after the training error reaches 0? It turns out we maximize the margin, defined as . In fact, given that

, we have for any

Hence, when the number of rounds goes to infinity the minimum margin on the training samples reaches . This property of boosting of maximizing the margin has been used as an explanation of the fact that in boosting additional rounds after the training error reaches 0 often keep improving the test error on test samples coming i.i.d. from the same distribution that generated the training samples.

The above reduction does not tell us how the training error precisely behaves. However, we can get this information changing the learning with expert algorithm. Indeed, if you read my old posts you should know that there are learning with experts algorithm provably better than EG. We can use a learning with experts algorithm that guarantees a regret for a given prior

, where

is the uniform prior. This kind of algorithms allow us to upper bound the fraction of mistakes after any

iterations. Denote by

the number of misclassified samples after

iterations of boosting and set

as the vector whose coordinates are equal to

if

misclassifies it. Hence, we have

Using the expression of the regret, we have that the fraction of misclassification errors is bounded by

. That is, fraction of misclassification error goes to zero exponential fast in the number of boosting rounds. Again, a similar guarantee was proved for AdaBoost, but here we quickly derived it using a reduction from boosting to learning with experts, passing through two-person games.

3. History Bits

The reduction from boosting to two-person game and learning with expert is from Freund and Schapire (1996). It seems that the question if a weak learner can be boosted into a strong learner was originally posed by Kearns and Valiant (1988) (see also Kearns (1988)) but I could not verify this claim because I could not find their technical report anywhere. It was answered in the positive by Schapire (1990). The AdaBoost algorithm is from Freund and Schapire (1997). The idea of using algorithms that guarantee a KL regret bound in (2) is from Luo and Schapire (2014).

Acknowledgements

Thanks to Nicolò Campolongo for hunting down my typos.

Very interesting and comprehensive.

LikeLiked by 1 person