12/4/2021: In the previous version of this post there were some incorrect details that didn’t allow me to sleep well at night. So, I rewrote it in the correct formalism, but please let me know if you see mistakes.

In this post, we talk about solving saddle-point problems with online convex optimization (OCO) algorithms. Next time, I’ll show the connection to two-person zero-sum games and boosting.

1. Saddle-Point Problems

We want to solve the following saddle-point problem

Let’s say from the beginning that we need inf and sup rather than min and max because the minimum or maximum might not exist. Everytime we know for sure the inf/sup are attained, I’ll substitute them with min/max.

While for the minimization of functions is clear what it means to solve it, it might not be immediate to see what is the meaning of “solving” the saddle-point problem in (1). It turns out that the proper notion we are looking for is the one of saddle-point.

Definition 1. Let

,

, and

. A point

is a saddle-point of

in

if

We will now state conditions under which there exists a saddle-point that solves (1). First, we need an easy lemma.

Lemma 2. Let

is any function from a non-empty product set

to

. Then,

Proof: For any and

we have that

. This implies that

for all

that gives the stated inequality.

We can now state the following theorem.

Theorem 3. Let

any function from a non-empty product set

to

. A point

is a saddle-point of

if and only if the supremum in

is attained at

, the infimum in

is attained at

, and these two expressions are equal.

Proof: If is a saddle-point, then we have

From Lemma 2, we have that these quantities must be equal, so that the three conditions in the theorem are satisfied.

For the other direction, if the conditions are satisfied, then we have

Hence, is a saddle-point.

Remark 1. The above theorem tells a surprising thing: If a saddle-point exists, then there might be multiple ones, and all of them must have the same minimax value. This might seem surprising, but it is due to the fact that the definition of saddle-point is a global and not a local property. Moreover, if the

and

problem have different values, no saddle-point exists.

Remark 2. Consider the case that the value of the

and

are different. If your favorite optimization procedure to find the solution of a saddle-point problem does not distinguish between a

and

formulation, then it cannot be correct!

Let’s show a couple of examples that show that the conditions above are indeed necessary.

Example 1. Let

,

, and

. Then, we have

while

Indeed, from Figure 1 we can see that there is no saddle-point.

Example 2. Let

,

, and

. Then, we have

and

Here, even if inf sup is equal to sup inf, the saddle-point does not exist because the inf in the first expression is not attained in a point of

.

This theorem also tells us that in order to find a saddle-point of a function , we need to find the minimizer in

of

and the maximizer in

of

. Let’s now use this knowledge to design a proper measure of progress towards the saddle-point.

We might be tempted to use as a measure of suboptimality of

with respect to the saddle-point

. Unfortunately, this quantity can be negative or equal to zero for an infinite number of points

that are not saddle-points. We might then think to use some notion of distance to the saddle-point, like

, but this quantity in general can go to zero at an arbitrarily slow rate. To see why consider the case that

, so that the saddle-point problem reduces to minimize a convex function. So, assuming only convexity, the rate of convergence to a minimizer of

can be arbitrarily slow. Hence, we need something different.

Observe that the Theorem 3 says one of the problems we should solve is

where . In this view, the problem looks like a standard convex optimization problem, where the objective function has a particular structure. Moreover, in this view we only focus on the variables

. The standard measure of convergence in this case for a point

, the suboptimality gap, can be written as

We also have to find the maximizer in of

, hence we have

where . This also implies another measure of convergence in which we focus only on the variable

:

Finally, in case we are interested in studying the quality of a joint solution , a natural measure is a sum of the two measures above:

where we assumed the existence of a saddle-point to say that from Theorem 3. This measure is called duality gap. From the definition it should be clear that the duality gap is always non-negative. Let’s add even more intuition of the duality gap definition, using the one of

-saddle-point.

Definition 4. Let

,

, and

. A point

is a

-saddle-point of

in

if

This definition is useful because we cannot expect to numerically calculate a saddle-point with infinite precision, but we can be find something that satisfies the saddle-point definition up to a . Obviously, any saddle-point is also an

-saddle-point.

Now, the notion of -saddle-point is equivalent up to a multiplicative constant to the

duality gap. In fact, it is easy to see that if

is an

-saddle-point then its duality gap of

. In turn, a duality gap of

and the existence of a saddle-point imply that the point is a

-saddle.

The above reasoning told us that finding the saddle-point of the function is equivalent to solving a maximization problem and a minimization problem. However, is it always the case that the saddle-point exists? So, let’s now move to easily checkable sufficient conditions for the existence of saddle-point. For this, we can state the following theorem.

Theorem 5. Let

,

be compact convex subsets of

and

respectively. Let

a continuous function, convex in its first argument, and concave in its second. Then, we have that

This theorem gives us sufficient conditions to have the min-max problem equal to the max-min one. So, for example, thanks to the Weierstrass theorem, the assumptions in Theorem 5, in light of Theorem 3, are sufficient conditions for the existence of a saddle-point.

We defer the proof of this theorem for a bit and we now turn ways to solve the saddle-point problem in (1).

2. Solving Saddle-Point Problems with OCO

Let’s show how to use Online Convex Optimization (OCO) algorithms to solve saddle-point problems.

Suppose to use an OCO algorithm fed with losses that produces the iterates

and another OCO algorithm fed with losses

that produces the iterates

. Then, we can state the following Theorem.

Theorem 6. Let

be convex in the first argument and concave in the second. Then, with the notation in Algorithm 2, for any

, we have

where

.

For any

, we have

where

.

Also, if

is continuous and

,

compact, we have

for any

and

.

Proof: The first two equalities are obtained simply observing that and

.

For the inequality, using Jensen’s inequality, we obtain

Summing the first two equalities, using the above inequality, and taking and

, we get the stated inequality.

From this theorem, we can immediately prove the following corollary.

Corollary 7. Let

be continuous, convex in the first argument, and concave in the second. Assume that

and

are compact and that the

-player and

-player use no-regret algorithms, possibly different, than

Example 3. Consider the saddle-point problem

The saddle-point of this problem is

. We can find it using, for example, Projected Online Gradient Descent. So, setting

, we have the iterations

According to Theorem 6, the duality gap in

converges to 0.

Surprisingly, we can even prove a (simpler version of the) minimax theorem from the above result! In particular, we will use the additional assumption that there exist OCO algorithms that minimize and

have sublinear regret.

Proof with OCO assumption: From Lemma 2, we have one inequality. Hence, we now have to prove the other inequality.

We will use a constructive proof. Let’s use Algorithm 2 and Theorem 6. For the first player, for any we have

Observe that

Hence, using an OCO algorithm that has regret for each

, we have

In the same way, we have

Summing the two inequalities, taking , and using the sublinear regret assumption, we have

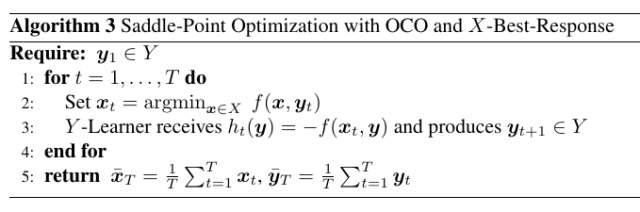

2.1. Variations with Best Response and Alternation

In some cases, it is easy to compute the max with respect to of

for a given

. For example, this is trivial for bilinear games over the simplex. In these cases, we can remove the second learner and just use its best response in each round. Note that in this way we are making one of the two players “stronger” through the knowledge of the loss of the next round. However, this is perfectly fine: The proof in Theorem 6 is still perfectly valid.

In this case, the -player has an easy life: it knows the loss before making the prediction, hence it can just output the minimizer of the loss in

. Hence, we also have that the regret of the

-player will be non-positive and it will not show up in Theorem 6. Putting all together, we can state the following corollary.

Corollary 8. Let

be continuous, convex in the first argument, and concave in the second. Assume

compact and that the argmax of the

-player is never empty. Then, with the notation in Algorithm 2, we have

for any

and where

.

This alternative seems interesting from a theoretical point of view because it allows to avoid the complexity of learning in the space, for example removing the dependency on its dimension. Of course, an analogous result can be stated using best-response for the

-player and an OCO algorithm for the

-player.

There is a third variant, very used in empirical implementations, especially of Counterfactual Regret Minimization (CFR) (Zinkevich et al., 2007). It is called alternation and it breaks the simultaneous reveal of the actions of the two players. Instead, we use the updated prediction of the first player to construct the loss of the second player. Empirically, this variant seems to greatly speed-up the convergence of the duality gap.

For this version, Theorem 6 does not hold anymore because the terms and

are now different, however we can prove a similar guarantee.

Theorem 9. Let

continuous, convex in the first argument, and concave in the second. Assume that

and

are compact. Then, with the notation in Algorithm 4, for any

and any

we have

where

and

.

Proof: Note that , and

. By Jensen’s inequality, we have

Taking and

, we get the stated result.

Remark 3. In the case that

is linear in the first argument, using OMD for the

-player we have that

. Hence, in this case the additional term in Theorem 9 is negative, showing a (marginal) improvement to the convergence rate.

Next post, we will show how to connect saddle-point problems with Game Theory.

3. History Bits

Theorem 3 is (Rockafellar, 1970, Lemma 36.2).

The proof of Theorem 6 is from (Liu and Orabona, 2021) and it is a minor variation on the one in (Abernethy and Wang, 2017): (Liu and Orabona, 2021) stressed the dependency of the regret on a competitor that can be useful for refined bounds, as we will show next time for Boosting. It is my understanding that different variant of this theorem are known in the game theory community as “Folk Theorems”, because such result was widely known among game theorists in the 1950s, even though no one had published it.

The celebrated minimax theorem for zero-sum two-person games was first discovered by John von Neumann in the 1920s (von Neumann, 1928)(von Neumann and Morgenstern, 1944). The version is state here is a simplification of the generalization due to (Sion, 1958). The proof here is from (Abernethy and Wang, 2017). A similar proof is in (Cesa-Bianchi and Lugosi, 2006) based on a discretization of the space that in turn is based on the one in (Freund, Y. and Schapire, 1996)(Freund, Y. and Schapire, 1999).

Algorithms 2 and 3 are a generalization of the algorithm for boosting in (Freund and Schapire, 1996)(Freund and Schapire, 1999). Algorithm 2 was also used in (Abernethy and Wang, 2017) to recover variants of the Frank-Wolfe algorithm (Frank and Wolfe, 1956).

It is not clear who invented alternation. Christian Kroer told me that it was a known trick used in implementations of CFR for the computer poker competition from 2010 or so. Note that in CFR the method of choice is Regret Matching (Hart and Mas-Colell, 2000). However, (Kroer, 2020) empirically shows that alternation improves a lot even OGD for solving bilinear games. (Tammelin et al., 2015) explicitly include this trick in their implementation of an improved version of CFR called CFR+, claiming that it would still guarantee convergence. However, (Farina et al., 2019) pointed out that averaging of the iterates in alternation might not produce a solution to the min-max problem, providing a counterexample. Theorem 9 is from (Burch et al., 2019) (see also (Kroer, 2020)).

There is also a complementary view on alternation: (Zhang et al., 2021) link alternating updates to Gauss-Seidel methods in numerical linear algebra, in contrast to the simultaneous updates of the Jacobi method. Also, they provide a good review of the optimization literature on the advantages of alternation, but this paper and the papers they cite do not seem to be aware of the use of alternation in CFR.

Acknowledgements

Thanks to Christian Kroer for the history of alternation in CFR, and to Gergely Neu for the references on alternation in the optimization community. Thanks to Nicolò Campolongo for hunting down my typos. Also, thanks to Christian Kroer for his great lecture notes that helped me getting started on this topic.