In this post, we talk about implicit updates in the online convex optimization framework (reminder: online stochastic). Instead, if you are interested in implicit updates in offline and stochastic optimization, I strongly suggest to read the amazing series of blogposts by Alex Shtof.

1. Implicit Updates

Let’s consider the two mostly commonly used frameworks for online learning: Online Mirror Descent (OMD) and Follow-The-Regularized-Leader (FTRL).

We already explained that in OMD we update the iterate as the minimizer of an linear approximation of the last loss function we received plus a term that measures the distance to the previous iterate:

where .

On the other hand, in FTRL we have two possibilities: we can minimize the regularized sum of the loss we have received till now or the regularized sum of the linear approximation of the losses. In the first case, we update with

while in the second case, we use

where for

. The second update is what optimization people call Dual Averaging. We also saw that under some reasonable conditions, the two updates of FTRL have the same regret guarantee. However, we would expect the second approach, the one using the exact loss functions, to perform much better in practice. Also, in the linearization of the losses we have to assume to know some characteristics of the losses, e.g., strong convexity parameter, to achieve the same regret of the full-losses FTRL.

Overall, we have two different frameworks and two different ways to use the loss functions in the update. So, it should be obvious that there is at least another possibility, that is OMD with exact loss. That is, we would like to consider the update

As in the FTRL case, we would expect this update to be better than the linearized one, at least empirically.

To gain some more intuition, let’s consider the simple case that ,

, and the losses

are differentiable. In this case, we have that the linearized OMD update becomes

For example, with square loss and linear predictors over couples input/labels , we have

and the update becomes

On the other hand, the update of OMD with the exact loss function becomes

The optimality condition tells us that satisfies

that is

So, the update is not in a closed formula anymore, but it has an implicit form, where appears in both side of the equality. This is exactly the reason why the update of OMD with exact losses is known in the online learning literature as implicit updates. So, we will call the update in (1) Implicit OMD.

Remark 1. Observe that for linear losses OMD and the Implicit OMD are equivalent.

In general, calculating the update of Implicit OMD can be an annoying optimization problem. However, in some cases the Implicit OMD update can still be solved in a closed form.

Example 1. Consider again linear regression with square loss. The update of Implicit OMD becomes

To solve the equation, we take the inner product of both sides with

, to obtain

that is

Substituting this expression in (3), we have

Implicit Updates are Always Descending Till now, we have motivated implicit updates purely from an intuitive point of view: We expect this algorithm to be better because we do not approximate the loss functions. Indeed, we often gain a lot in performance switching to implicit updates. However, we can even prove that implicit updates have interesting theoretical properties.

First, contrarily to OGD, implicit updates remains “sane” even when the learning rate goes to infinity. Indeed, taking to infinity in (1),

becomes simply the minimizer of the loss function. On the other hand, in OMD when the learning rate goes to infinity we can take a step that is arbitrarily far from the minimizer of the function!

When we consider non-differentiable convex functions, there is another important difference between implicit updates and subgradient descent updates. We already saw that the subgradient might not point in a descent direction. That is, no matter how we choose , the value of the function in

might be higher than in

. On the other hand, this cannot happen with implicit updates, no matter how we choose the learning rate:

Proximal updates Are implicit updates actually an invention of online learning people? Actually, no. Indeed, these kind of updates were known at least in 1965 (!) and they were proposed for the (offline) optimization of functions with the name of proximal updates. Basically, we have a function and we minimize it iteratively with the update

starting from an initial point . At first sight, such update might seem pointless in offline optimization: being able to solve (4) implies being also able to find the minimizer of

in one step! However, as we have previously discussed, these kind of updates find an application when the function

is composed by two parts and we decide to linearize only one part.

2. Passive-Aggressive

Now, let me show you that implicit updates were actually used a lot in the online literature, even if many people did not realize it.

Let’s take a look at a very famous online learning algorithm: the Passive-Aggressive (PA) algorithm. PA was a major success in online learning: 2099 citations and counting, that is huge for the online learning area. The theory was not very strong, but the performance of these algorithms was way better than anything else we had at that time. Let’s see how the PA algorithm works.

The PA algorithm was introduced before the Online Convex Optimization (OCO) framework was proposed. So, at that time, online learning for classification and regression focused on the particular case in which the loss functions have the form , basically the loss of linear predictors over couples input/label

. The PA algorithm in particular focused on losses that can be zero over intervals, like the hinge loss, the squared hinge loss, the

-insensitive loss, and the squared

-insensitive loss. For these losses, the update they proposed was

where is a hyperparameter. Now, this is exactly the Implicit OMD update with the special case of the squared L2 Bregman divergence! The choice of the loss functions makes this update always in a closed form. So, for example, for the hinge loss and linear predictors, we have

where the second equality is calculated using the optimality condition and it is left as an exercise to the reader.

So, the huge boost in performance of PA over other online algorithm is due uniquely to the implicit updates.

3. Implicit Updates on Truncated Linear Models: aProx

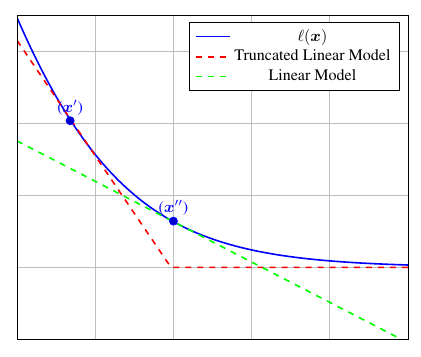

There is another first-order optimization algorithm inspired to implicit updates. As we said, implicit updates are rarely in a closed form. So, we can try to approximate the implicit updates in some way. One possibility is to use the implicit update on a surrogate loss function. Indeed, when we use a linear approximation we recover plain OMD. Instead, when we use the exact function we get the implicit updates. What can we use in between the two cases? We could think to use a truncated linear model. That is, in the case we know that the functions are lower bounded by some , we define

for any . Note that this is a lower bound to the loss function

and it is piecewise linear.

Now, we can use these surrogate function in the implicit OMD:

Implicit OMD with truncated linear models and the squared L2 Bregman is called aProx (Asi and Duchi, 2019).

Considering and

, we again have

where is a specific vector in

. Now, we have 2 possibilities:

is in the flat part or in the corner of

. Indeed, it should be easy to see that the proximal update assures us that we cannot miss the corner and land on the flat part. So, if we are in the linear part, then

. Instead, if we are in the corner we have

where

. Hence, we always have

and we only need to find . Substituting

in the definition of

and using first-order optimality condition, we can verify that the following is the closed formula of the update (left as an exercise)

The similarity between this update and the one of PA in (5) should be adamant, that is due to the similarity between the truncated linear model and the hinge loss. Indeed, running aProx on linear classifiers with hinge loss is exactly the PA algorithm.

4. More Updates Similar to the Implicit Updates

From an empirical point of view, we can gain a lot of performance using implicit updates, even just approximating them. So, it should not be surprising if people proposed and used similar ideas in many optimization algorithm. Let me give you some examples.

The default optimization algorithm in the machine learning library Vowpal Wabbit (VW) uses the Importance Weight Aware Updates (Karampatziakis and Langford, 2011). These updates essentially approximate the implicit update using a differential equation that for linear models can be calculated in a closed formula. So, if you ever used VW, you already used a close relative of implicit updates, probably without knowing it.

Another interesting example is the setting of adaptive filtering, where one wants to minimize . In this setting, a classic algorithm is Least Mean Squared (LMS) algorithm that corresponds to Online Gradient Descent with linear models and squared loss. Now, a known better version of the LMS is the normalized LMS, that is nothing else that Implicit OMD with linear models and squared loss.

There are even interpretations of the Nesterov’s accelerated gradient method as an implicit update on a curved space (Defazio, 2019).

So, implicit updates are so “natural” that I personally think that any offline/online optimization algorithm that has good performance must be a good approximation of implict updates. Hence, I am sure there are even more examples of implicit updates hiding in other well-known algorithms.

5. Regret Guarantee for Implicit Updates

From the above reasoning, it seems very intuitive to expect a better regret bound for implicit updates. However, it turns out particularly challenging to prove a quantifiable advantage of implicit updates over OMD ones in the adversarial setting.

Here, I show a very recent result of mine on Implicit OMD that for the first time shows a clear advantage of Implicit OMD in some situations.

First, we can show the following theorem.

Theorem 1. Assume a constant learning rate

. Then, implicit OMD guarantees

Moreover, assume the distance generating function to be 1-strongly convex w.r.t.

. Then, there exists

such that we have

Proof: To obtain this bound, we proceed in a slightly different way than in the classic OMD proof. In particular, for any we have

where and in the second inequality we have used the optimality condition of the update. Adding

to both terms of the inequality, dividing by

, and reordering, we have

Summing over time, we get the first bound.

For the second bound, let’s now focus on the terms and we upper bound them in two different ways. First, using the convexity of the losses, we can bound the difference between

and

:

where . Also, from the strong convexity of

, we have

Hence, putting all together we have

where in the last inequality we used the elementary inequality .

From the optimality condition of the implicit OMD update, we know that there exists such that

Hence, we have

where we used the convexity of the Bregman divergence in its first argument in the second inequality and the optimality condition of the update in the third inequality. This chain of inequalities implies that that gives the second bound in the minimum.

The theorem shows a possible and small improvement over the OMD regret bound. In particular, there might be sequences of losses where . The fact that the improvement is only possible on some sequences is to be expected: the OMD regret is worst-case optimal on bounded domains, so there is not much to gain. However, maybe we could expect a larger gain on some particular sequence of functions. Indeed, we can show that on some sequences of losses we can achieve constant regret! Let’s see how.

From the regret above, we have

Denoting by the temporal variability of the losses, we have that the regret guarantee is

Now, in the case that the loss functions are all the same and the regret upper bound becomes a constant independent of

. It is worth reminding that constant regret is the best we can hope for in online convex optimization! In other words, when online learning becomes as easy as offline learning (i.e., all the losses are equal), that implicit updates give us a provable large boost.

However, there is a caveat: In order to get a regret in the general case we need

. On the other had, if

we want

. The problem in online learning learning is that we do not know the future, so we need some adaptive strategy that changes

in a dynamic way. This is indeed possible and we leave this as an exercise, see below.

Our last observation is that we can recover the constant regret bound even for FTRL when used on the exact losses. Again, this is due to the use of the exact losses rather than the linear approximation. Remember that FTRL predicts with , where

. Hence, from the FTRL regret equality and assuming a non-decreasing regularizer, we have

However, FTRL with exact losses requires to solve a finite sum optimization problem whose size grows with the number of iterations. Instead, Implicit OMD uses only one loss in each round, resulting in a closed formula in a number of interesting cases. We also note that we would have the same tuning problem as before: in order to get a constant regret when , we would need the regularizer to be constant and independent from time, while it should grow as

in the general case.

6. History Bits

The implicit updates in online learning were proposed for the first time by (Kivinen and Warmuth, 1997). However, such update with the Euclidean divergence is the Proximal update in the optimization literature dating back at least to 1965 (Moreau, 1965)(Martinet, 1970)(Rockafellar, 1976)(Parikh and Boyd, 2014), and more recently used even in the stochastic setting (Toulis and Airoldi, 2017)(Asi and Duchi, 2019).

The PA algorithms were proposed in (Crammer et al., 2006), but the connection with implicit updates was absent in the paper. I am not sure who first realized the connection: I realized it in 2011 and I showed it to Joseph Keshet (one of the author of PA) that encouraged me to publish it somewhere. Only 10 years later, I am doing it 🙂 Note that the mistake bound proved in the PA paper is worse than the Perceptron bound. Later, we proved a mistake bound for PA that is strictly better than the classic Perceptron’s bound (Jie et al., 2010).

The very nice idea of truncated linear models was proposed by (Asi and Duchi, 2019) as a way to approximate proximal updates and retaining closed form updates.

The connection between implicit OMD and normalized LMS was shown by (Kivinen et al., 2006).

(Kulis and Bartlett, 2010) provide the first regret bounds for implicit updates that match those of OMD, while (McMahan, 2010) makes the first attempt to quantify the advantage of the implicit updates in the regret bound. Finally, (Song et al., 2018) generalize the results in (McMahan, 2010) to Bregman divergences and strongly convex functions, and quantify the gain differently in the regret bound. Note that in (McMahan, 2010)(Song et al., 2018) the gain cannot be exactly quantified, providing just a non-negative data-dependent quantity subtracted to the regret bound. The connection between temporal variation and implicit updates was shown in (Campolongo and Orabona, 2020), together with a matching lower bound.

7. Acknowledgements

Thanks to Nicolò Campolongo for feedback on a draft of this post.

8. Exercises

Exercise 1. Prove that the update of PA given above is correct.

Exercise 2. Prove that the update of aProx given above is correct.

Exercise 3. Find an learning rate strategy to adapt to the value of

without knowing it (Campolongo and Orabona, 2020).

Is the \eta/2 multiplying the first inequality of Theorem 1 a typo?

LikeLiked by 1 person

You are right! Fixed, thanks.

LikeLike