This post is part of the lecture notes of my class “Introduction to Online Learning” at Boston University, Fall 2019. I will publish two lectures per week.

You can find the lectures I published till now here.

In this lecture, we will consider the problem of online linear classification. We consider the following setting:

- At each time step we receive a sample

- We output a prediction of the binary label

of

- We receive the true label and we see if we did a mistake or not

- We update our online classifier

The aim of the online algorithm is to minimize the number of mistakes it does compared to some best fixed classifier.

We will focus on linear classifiers, that predicts with the sign of the inner product between a vector and the input features

. Hence,

. This problem can be written again as a regret minimization problem:

where . It should be clear that these losses are non-convex. Hence, we need an alternative way to deal with them. In the following, we will see two possible approaches to this problem.

1. Online Randomized Classifier

As we did for the Learning with Expert Advice framework, we might think to convexify the losses using randomization. Hence, on each round we can predict a number in and output the label

according with probability

and the label

with probability

. So, define the random variable

Now observe that . If we consider linear predictors, we can think to have

and similarly for the competitor

. Constraining both the algorithm and the competitor to the space of vectors where

for

, we can write

Hence, the surrogate convex loss becomes and the feasible set is any convex set where we have the property

for

.

Given that this problem is convex, assuming to be bounded w.r.t. some norm, we can use almost any of the algorithms we have seen till now, from Online Mirror Descent to Follow-The-Regularized-Leader (FTRL). All of them would result in

regret upper bounds, assuming that

are bounded in some norm. The only caveat is to restrict

in

. One way to do it might be to consider assuming

and choose the feasible set

.

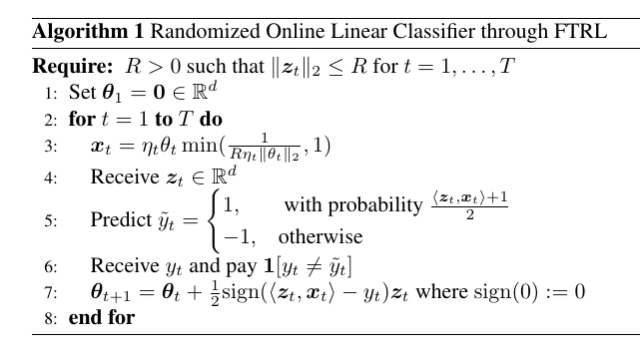

Putting all together, for example, we can have the following strategy using FTRL with regularizers .

Theorem 1 Let

an arbitrary sequence of samples/labels couples where

and

. Assume

, for

. Then, running the Randomized Online Linear Classifier algorithm with

where

, for any

we have the following guarantee

Proof: The proof is straightforward from the FTRL regret bound with the chosen increasing regularizer.

2. The Perceptron Algorithm

The above strategy has the shortcoming of restricting the feasible vectors in a possibly very small set. In turn, this could make the performance of the competitor low. In turn, the performance of the online algorithm is only close to the one of the competitor.

Another way to deal with the non-convexity is to compare the number of mistakes that the algorithm does with a convex cumulative loss of the competitor. That is, we can try to prove a weaker regret guarantee:

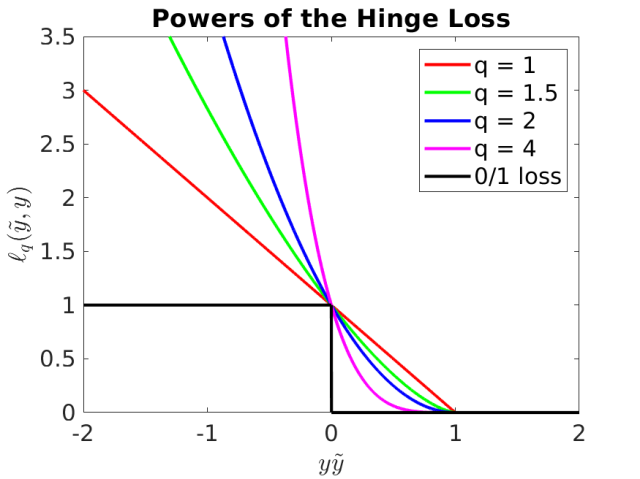

In particular, the convex loss we consider is powers of the Hinge Loss: . The hinge loss is a convex upper bound to the 0/1 loss and it achieves the value of zero when the sign of the prediction is correct and the magnitude of the inner product is big enough. Moreover, taking powers of it, we get a family of functions that trade-offs the loss for the wrongly classified samples with the one for the correctly classified samples but with a value of

, see Figure 1.

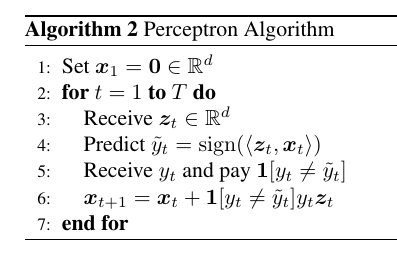

The oldest algorithm we have to minimize the modified regret in (1) is the Perceptron algorithm, in Algorithm 2.

The Perceptron algorithm updates the current prediction moving in the direction of the current sample multiplied by its label. Let’s see why this is a good idea. Assume that

and the algorithm made a mistake. Then, the updated prediction

would predict a more positive number on the same sample

. In fact, we have

In the same way, if and the algorithm made a mistake, the update would result in a more negative prediction on the same sample.

For the Perceptron algorithm, we can prove the following guarantee.

Theorem 2 Let

an arbitrary sequence of samples/labels couples where

and

. Assume

, for

. Then, running the Perceptron algorithm we have the following guarantee

Before proving the theorem, let’s take a look to its meaning. If there exists a such that

, then the Perceptron algorithm makes a finite number of mistakes upper bounded by

. In case that are many

that achieves

we have that the finite number of mistakes is bounded the norm of the smallest

among them. What is the meaning of this quantity?

Remember that a hyperplane represented by its normal vector divides the space in two half spaces: one with the points

that give a positive value for the inner product

and other one where the same inner product is negative. Now, we have that the distance of a sample

from the hyperplane whose normal is

is

Also, given that we are considering a that gives cumulative hinge loss zero, we have that that quantity is at least

. So, the norm of the minimal

that has cumulative hinge loss equal to zero is inversely proportional to the minimum distance between the points and the separating hyperplane. This distance is called the margin of the samples

. So, if the margin is small, the Perceptron algorithm can do more mistakes than when the margin is big.

If the problem is not linearly separable, the Perceptron algorithm satisfies a regret of , where

is the loss of the competitor. Moreover, we measure the competitor with a family of loss functions and compete with the best

measured with the best loss. This adaptivity is achieved through two basic ingredients:

- The Perceptron is independent of scaling of the update by a hypothetical learning rate

, in the sense that the mistakes it does are independent of the scaling. That is, we could update with

and have the same mistakes and updates because they only depend on the sign of

. Hence, we can think as it is always using the best possible learning rate

.

- The weakened definition of regret allows to consider a family of loss functions, because the Perceptron is not using any of them in the update.

Let’s now prove the regret guarantee. For the proof, we will need the two following technical lemmas.

Lemma 3 (F. Cucker and D. X. Zhou, 2007, Lemma 10.17) Let

be such that

. Then

Proof: Let , then we have

. Solving for

we have

. Hence,

.

Proof: } Denote by the total number of the mistakes of the Perceptron algorithm by .

First, note that the Perceptron algorithm can be thought as running Online Subgradient Descent (OSD) with a fixed stepsize over the losses

over

. Indeed, OSD over such losses would update

Now, as said above, does not affect in any way the sign of the predictions, hence the Perceptron algorithm could be run with (2) and its predictions would be exactly the same. Hence, we have

Given that this inequality holds for any , we can choose the ones that minimizes the r.h.s., to have

Note that . Also, we have

So, denoting by , we can rewrite (3) as

where we used Holder’s inequality and .

Given that and denoting by

, we have

Let’s now consider two cases. For , we can use Lemma 4 and have the stated bound. Instead, for

, using Lemma 3 we have

that implies

Using the fact that , we have

Finally, using Lemma 4, we have the stated bound.

3. History Bits

The Perceptron was proposed by Rosenblatt (F. Rosenblatt, 1958). The proof of convergence in the non-separable case for is by (C. Gentile, 2003) and for

is from (Y. Freund and R. E. Schapire, 1999). The proof presented here is based on the one in (Beygelzimer, A. and Orabona, F. and Zhang, C., 2017).

Hi Francesco, in Algorithm 1 line 3 when we do the projection shouldn’t R be in the denominator?

Nicolò

LikeLike

Hi Nicolò, no, this looks correct to me: the first term in the min is selected when the norm is bigger than R, and projected back to R, no?

LikeLike

But didn’t we assume the norm of x bounded by 1 / R ? you say “choose the feasible set {V=\{{\boldsymbol x} \in{\mathbb R}^d : \|{\boldsymbol x}\|\leq \frac{1}{R}\}}. “

LikeLiked by 1 person

You are right! Fixed, thanks!

LikeLike