This post is part of the lecture notes of my class “Introduction to Online Learning” at Boston University, Fall 2019. I will publish two lectures per week.

You can find the lectures I published till now here.

Today, we will present the problem of multi-armed bandit in the adversarial setting and show how to obtain sublinear regret.

1. Multi-Armed Bandit

This setting is similar to the Learning with Expert Advice (LEA) setting: In each round, we select one expert and, differently from the full-information setting, we only observe the loss of that expert

. The aim is still to compete with the cumulative loss of the best expert in hindsight.

As in the learning with expert case, we need randomization in order to have a sublinear regret. Indeed, this is just a harder problem than LEA. However, we will assume that the adversary is oblivious, that is, he decides the losses of all the rounds before the game starts, but with the knowledge of the online algorithm. This makes the losses deterministic quantities and it avoids the inadequacy in our definition of regret when the adversary is adaptive (see (Arora, R. and Dekel, O. and Tewari, A., 2012)).

This kind of problems where we don’t receive the full-information, i.e., we don’t observe the loss vector, are called bandit problems. The name comes from the problem of a gambler who plays a pool of slot machines, that can be called “one-armed bandits”. On each round, the gambler places his bet on a slot machine and his goal is to win almost as much money as if he had known in advance which slot machine would return the maximal total reward.

In this problem, we clearly have an exploration-exploitation trade-off. In fact, on one hand we would like to play at the slot machine which, based on previous rounds, we believe will give us the biggest win. On the other hand, we have to explore the slot machines to find the best ones. On each round, we have to solve this trade-off.

Given that we don’t observe completely observe the loss, we cannot use our two frameworks: Online Mirror Descent (OMD) and Follow-The-Regularized-Leader (FTRL) both needs the loss functions or at least lower bounds to them.

One way to solve this issue is to construct stochastic estimates of the unknown losses. This is a natural choice given that we already know that the prediction strategy has to be a randomized one. So, in each round we construct a probability distribution over the arms

and we sample one action

according to this probability distribution. Then, we only observe the coordinate

of the loss vector

. One possibility to have a stochastic estimate of the losses is to use an importance-weighted estimator: Construct the estimator

of the unknown vector

in the following way:

Note that this estimator has all the coordinates equal to 0, except the coordinate corresponding the arm that was pulled.

This estimator is unbiased, that is . To see why, note that

and

. Hence, for

, we have

Let’s also calculate the (uncentered) variance of the coordinates of this estimator. We have

We can now think of using OMD with an entropic regularizer and the estimated losses. Hence, assume and set

defined as

, that is the unnormalized negative entropy. Also, set

. Using the OMD analysis, we have

We can now take the expectation at both sides and get

We are now in troubles, because the terms in the sum scale as . So, we need a way to control the smallest probability over the arms.

One way to do it, is to take a convex combination of and a uniform probability. That is, we can predict with

, where

will be chosen in the following. So,

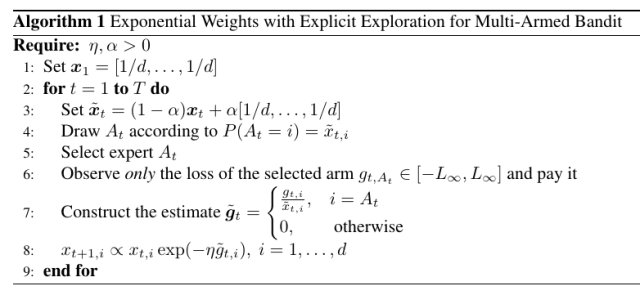

can be seen as the minimum amount of exploration we require to the algorithm. Its value will be chosen by the regret analysis to optimally trade-off exploration vs exploitation. The resulting algorithm is in Algorithm 1.

The same probability distribution is used in the estimator:

We can have that . However, we pay a price in the bias introduced:

Observing that , we have

Putting together the last inequality and the upper bound to the expected regret in (2), we have

Setting and

, we obtain a regret of

.

This is way worse than the of the full-information case. However, while it is expected that the bandit case must be more difficult than the full information one, it turns out that this is not the optimal strategy.

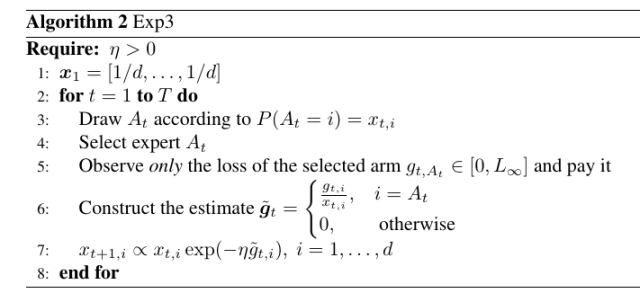

2. Exponential-weight algorithm for Exploration and Exploitation: Exp3

It turns out that the algorithm above actually works, even without the mixing with the uniform distribution! We were just too loose in our regret guarantee. So, we will analyse the following algorithm, that is called Exponential-weight algorithm for Exploration and Exploitation (Exp3), that is nothing else than OMD with entropic regularizer and stochastic estimates of the losses. Note that now we will assume that .

Let’s take another look to the regret guarantee we have. From the OMD analysis, we have the following one-step inequality that holds for any

Let’s now focus on the term . We said that for a twice differentiable function

, there exists

such that

, where

. Hence, there exists

such that

and

So, assuming the Hessian in to be positive definite, we can bound the last two terms in the one-step inequality of OMD as

where we used Fenchel-Young inequality with the function and

and

.

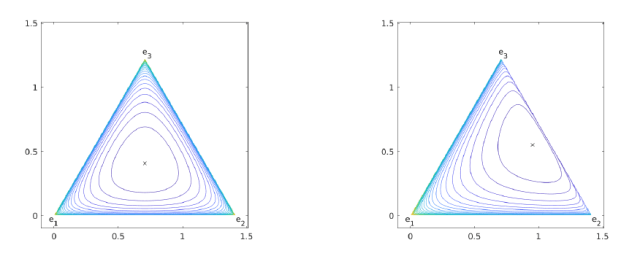

(left) and when

(right).

is small, we have a smaller growth of the divergence. This can be seen also graphically in Figure 1. Indeed, for the entropic regularizer, we have that the Hessian is a diagonal matrix:

This expression of the Hessian a regret of

where and

. Note that for any

is in the simplex, so this upper bound is always better than

that we derived just using the strong convexity of the entropic regularizer.

However, we don’t know the exact value of , but only that it is on the line segment between

and

. Yet, if you could say that

, in the bandit case we would obtain an expected regret guarantee of

, greatly improving the bound we proved above!

In the next lecture, we will see an alternative way to analyze OMD that will give us exactly this kind of guarantee for Exp3 and will use give us the optimal regret guarantee using the Tsallis entropy in few lines of proof.

3. History Bits

The algorithm in Algorithm 1 is from (Cesa-Bianchi, N. and Lugosi, G. , 2006, Theorem 6.9). The Exp3 algorithm was proposed in (Auer, P. and Cesa-Bianchi, N. and Freund, Y. and Schapire, R. E., 2002).