We now consider online learning with delayed feedback. We consider a constant delay of length , where at time

the learner has only observed

before producing

. In other words, the learner observes

at time

. This means that the algorithm receives its first feedback at round

and it receives no feedback before that round. We can expect that the delay will increase the regret of the algorithm and one can show that the optimal regret should depend on

as

(Weinberger&Ordentlich, 2002). Let’s see how to achieve this optimal dependency.

1. Delay as Bad Hints

Instead of designing online algorithms specifically for the case of delayed feedback, we will reduce the setting of online learning with delays to the one of optimistic online learning, that is, when we receive the hint at each round

and we use it in optimistic algorithms. In particular, using FTRL with linearized losses with delays means that we predict with

for

and

On the other hand, in Optimistic FTRL without delays and linearized losses we predict with

Hence, the two updates are equivalent if we set . In other words, we are receiving a very bad hint that corresponds to the delayed feedback.

In the same way, we have that in OMD with delays we predict the same for the first

rounds and

On the other hand, Optimistic OMD without delays updates with

where . So, the two are equivalent by setting

. So, unrolling, we have

.

The above observations are very powerful because they can immediately give us the regret guarantee of FTRL and OMD with delays. Indeed, considering FTRL, assuming that the regularizer is

-strongly convex with respect to

, we have

The result for OMD with delays is similar. The optimal tuning of would give us a

regret upper bound, but a linear dependency on

. Unfortunately, according to what we said above, this is suboptimal…

Where is the problem? Is the reduction from delays to optimistic updates suboptimal? This seems unlikely, given that the reduction is exact. Instead, the problem is somewhere else: The optimistic bounds we have are suboptimal!

So, in the next two sections, we show a better bound for Optimistic OMD and Optimistic FTRL, which will imply the optimal bound for OMD and FTRL with delays.

2. Improved Optimistic OMD Bound

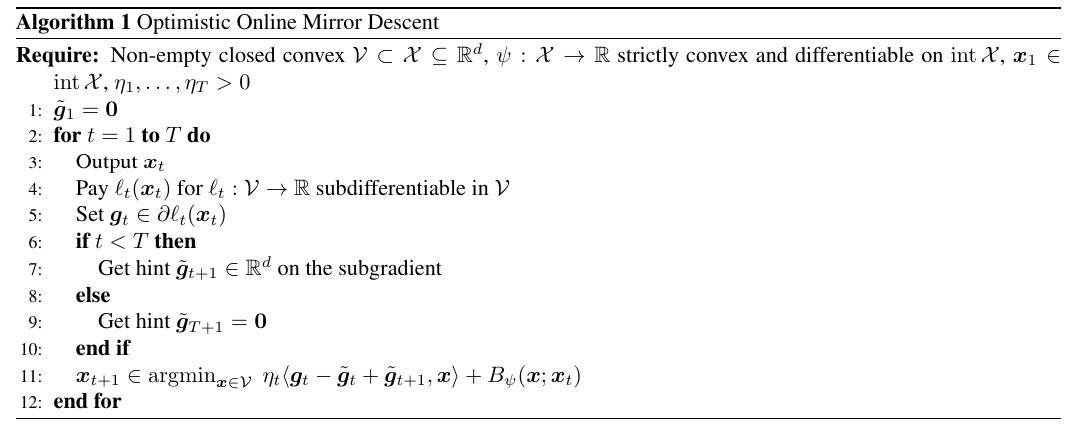

As we already saw, the following one is the pseudo-code of Optimistic OMD.

Note that setting is not a limitation because setting it to any other value would be equivalent to changing the arbitrary initial point

. In the same way, setting

does not change in any way the behaviour of the algorithm in round

, but it is needed to simplify the analysis.

We can now state its improved regret guarantee.

Theorem 1. Let

be the Bregman divergence with respect to

and assume

to be proper, closed, and

-strongly convex with respect to

in

. Let

be a non-empty closed convex set. With the notation in Algorithm 1, assume each

exists and lies in

. Assume

for

. Define

. Then,

, the following regret bounds hold

Moreover, if

is constant, i.e.,

, we have

To prove this improved theorem, we will use the following technical lemmas.

Lemma 2. Assume

to be

-strongly convex w.r.t.

and

. Let

be convex. Let

. Then, we have

Proof: Observe that from the optimality condition on , we have

Hence, we have

where in first inequality we used the strong convexity of , (1) in the second one, and the definition of dual norms in the last one. Reordering, we have the stated bound.

Lemma 3. Let

and

. Then, we have

Proof:

The second inequality is obtained by lower bounding the max with its two possible values and overapproximating.

We can now prove the Theorem.

Proof: We can use the one-step lemma for OMD with , to have

Summing over the l.h.s., we obtain

Summing the r.h.s., we have that

Finally, observe that

Telescoping the terms with the Bregman divergences gives the first bound.

Now, using the strong convexity of , we have

From Lemma 2, we know that is upper bounded by

. Hence, we have

Using Lemma 3 gives the second bound.

The bound for fixed is proved in a similar way.

The advantage of these bounds is that the terms in the sum only depends linearly on bad hints, rather than quadratically. Next, we will use exactly this property to obtain the optimal regret guarantee for OMD with delayed feedback.

From Optimistic OMD to Delayed OMD. We now show the improved bound for OMD with delays and constant learning rate. The delay-to-optimism conversion says that we have to set for

, yet we must set

for the Optimistic OMD proof. Hence, we obtain a reduced term in the sum for the first

rounds:

That is, assuming bounded for all

, we improved the worst-case dependency in the dominant term of the bound from

to

. Choosing the learning rate in the optimal way, we obtain the optimal regret guarantee of

.

3. Improved Optimistic FTRL Bound for Linear Losses

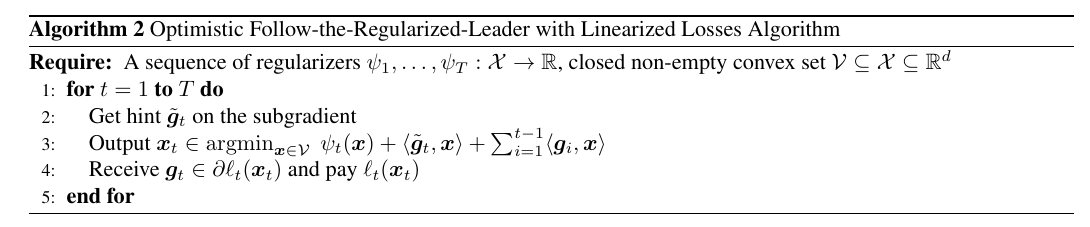

We now prove a similar improved regret guarantee for Optimistic FTRL with linearized losses, whose pseudo-code is the following one.

Here, we will give an improved version of the regret bound for Optimistic FTRL with linearized losses. The analysis will use an auxiliary sequence of predictions, and then relate these predictions to the ones of Optimistic FTRL.

Define for

the auxiliary sequence of predictions obtained with Optimistic FTRL with linearized losses with losses

and hints

. Given that this auxiliary sequence is only used in the analysis, we do not have to specify the choice of the hints

. Instead, we will leave them free, and state the final bound for any of these choices. Formally, defining

we have

.

Theorem 4. With the notation in Algorithm 2, let

be convex, closed, non-empty. For

, if

is closed, subdifferentiable, and

-strongly convex with respect to

in

, then

exists and is unique. Moreover, if in addition

pointwise for all

, we have

for all

and all

for

.

To prove this theorem we will need the following stability lemma for FTRL.

Lemma 5. Assume

to be

-strongly convex with respect to

. Define

and

. Then,

.

Proof: Define and

. From the strong convexity of

, we have

Hence, summing these two inequalities, we have

that completes the proof.

We can now prove Theorem 4.

Proof: Using the regret bound for optimistic FTRL on the predictions , we can now upper bound the regret of

as

Now, we focus our attention on . From Lemma 5, we have

.

Using this inequality in the regret bound above, for any and any

, we have

Remember that is only used in the analysis. So, this upper bound holds for any choice of

.

Note that the minimization with respect to can be solved only with the knowledge of the dual norm used. However, we have the following upper bound (proof left as exercise, see Exercise 1)

From Optimistic FTRL to Delayed FTRL. For our aim of analyzing online learning with delays, we can choose . Then, using the obtained bound in the delay-to-optimism conversion, we have that FTRL with delayed linearized losses and increasing regularizer satisfies

That is, assuming that the are bounded, we improved the dependency in the sum from

to

. Choosing the strong convexity of the regularizer in the optimal way, we obtain the optimal regret guarantee of

.

4. Conclusion

I have described only the basic idea behind the reduction from online learning with delayed feedback to optimistic updates with bad hints. It should be immediate to see that this reduction allows us to use any variant for optimistic updates to study delayed updates. For example, it is now immediate to use data-depedent learning rates or regularizers to obtain tigher regret bounds under particular situations.

5. History Bits

Weinberger&Ordentlich (2002) were the first to analyze the delayed feedback problem. They considered the adversarial full information setting with a fixed, known delay . They achieved the optimal rate by proposing a black-box reduction, by running

online algorithms on subsampled sequences. Zinkevich et al. (2009) analyzed OMD with delayed feedback and obtained the optimal regret. Joulani et al. (2013) unified most of the prior research on the effect of delay in adversarial and stochastic problems. McMahan&Streeter (2014) studied a variant of AdaGrad with delays. Quanrud&Khashabi (2015) studied OGD and FTRL with adversarially chosen delays, while Joulani et al. (2016) studied OMD and FTRL-Proximal with adaptive learning rates.

The equivalence of delays/bad hints and the improved regret guarantees for Optimistic FTRL and Optimistic OMD are due to Flaspohler et al. (2021). However, their OMD bound contains a small mistake: They are missing the last terms in the bound, due to the fact that we set the breaking the equivalence between optimistic and delayed updates for

. Note that while the choice of

does not influence the algorithm in the rounds

, its value appears in the regret upper bound. (As soon as we have time, we will fix the arxiv version of Flaspohler et al. (2021)…) Flaspohler et al. (2021) also show that RM and RM+ automatically adapts to the delay

.

Lemma 2 is from Joulani et al. (2016).

Acknowledgments

Thanks for ChatGPT 5.2 for checking the proofs. (Yet, I found the mistake in the previous paper, while ChatGPT 5.2 was claiming that everything was correct!)

6. Exercises

Exercise 1. Let

and

a norm. Then, show that