This is the last post in this series to show that online learning is more than online learning. This time it is about using an online linear optimization algorithm to minimize non-convex, non-smooth functions with access to stochastic gradients. Unlike in the previous two posts, here we will actually use the online algorithm itself, not just its regret guarantee. Nevertheless, the same philosophy applies: a regret guarantee is a statement that the sequence produced by the online algorithm has certain useful properties. So, even though the online linear optimization algorithm only minimizes linear functions, it can be used to design an optimization algorithm for non-convex functions.

1. From Online Learning to Non-Convex Non-Smooth Optimization

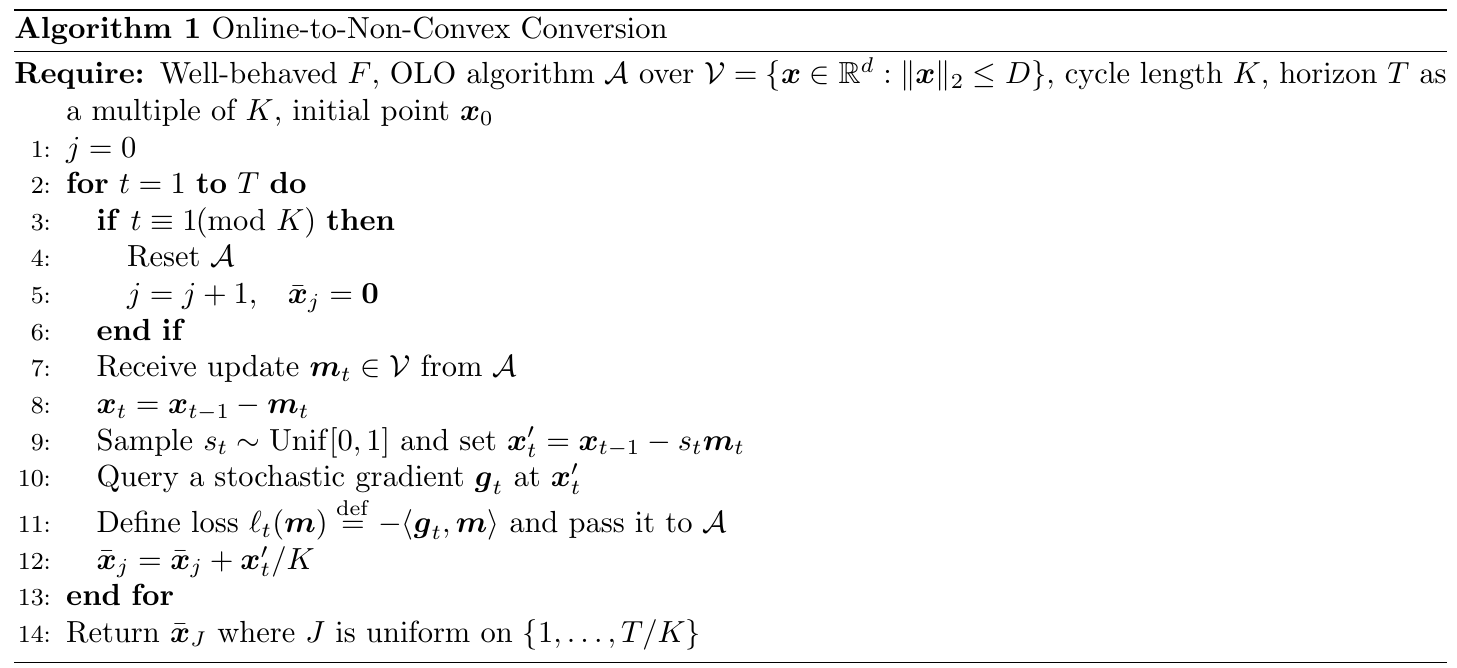

This section describes a simple (and surprisingly sharp) reduction from Online Linear Optimization (OLO) to the task of finding approximate stationary points of non-convex, non-smooth objectives. The key idea is to feed stochastic gradients to an online algorithm, which then decides the updates rather than the iterates.

We consider the objective function , which is differentiable but not necessarily smooth, i.e., the gradient map might not be Lipschitz.

Example 1. Consider

defined as

. Then,

, where

. Hence,

is differentiable everywhere, but its derivative is not Lipschitz because

is unbounded when

.

To connect function values to gradients without convexity or smoothness, we isolate the only calculus identity needed by the reduction. So, we will define well-behaved functions as those that satisfy the specific equality we need.

Definition 1 (Well-behaved function). A differentiable function

is well-behaved if for every

,

Remark 1. Identity (1) is immediate for smooth functions (by the fundamental theorem of calculus applied to

), but it can hold well beyond smooth objectives. In particular, Cutkosky et al. (2023) show that if

is locally Lipschitz then an arbitrarily small randomized smoothing yields a differentiable well-behaved surrogate with a natural stochastic gradient oracle. Here, we focus on the differentiable well-behaved case to keep the presentation simple.

We now define our notion of optimality. Since is non-convex and not necessarily smooth, small gradients are not obviously implied by function decrease; we instead certify a local averaged stationarity notion that measures how close we are to a local minimum by considering local averages of gradients.

Definition 2 (Barycentric

-stationarity). Let

be differentiable almost everywhere, and let

. Let

be the set of random variables

with finite support in the ball of radius

around

, such that

exists for all

in the support of

. Define

We say that

is a barycentric

-stationary point if

.

Remark 2. This notion is closely related to Goldstein-type

-subdifferential stationarity, but we use gradients, and it is barycentric: the same convex weights used to average gradients must also average the sampled points back to

.

1.1. The Reduction Algorithm

We now present the reduction from non-convex optimization of well-behaved functions to online linear optimization.

Fix a cycle length and a radius

. We will run an OLO algorithm

on the Euclidean ball

, where the OLO algorithm outputs updates

. The iterates are defined by

. Then, at each step we draw a random point

on the segment from

to

and query a stochastic gradient there. The final output

is the average of

points

inside a random cycle

. The complete procedure is given in Algorithm 1.

Theorem 3. Let

be well-behaved (Definition 1). Let

be the sigma-field generated by all randomness up to round

before querying

, so that

is

-measurable. With the notation in Algorithm 1, we assume

for some

. Let

be a multiple of the cycle length

. Run Algorithm 1 for

steps with cycle length

, and instantiate

as projected \ac{OGD} over

with learning rate

and initial point equal to

on each cycle (i.e., after each reset). Then,

In particular, for

, choose

and then set

rounded to an integer. Then,

Proof: We first prove the key identity linking change in function value to an expected gradient. By Definition 1, we have

where the second to last equality is due to the fact that is uniform on

.

Taking expectation over all randomness up to time (including

) and for any

, we have

where in the second to last equality we used that is determined before querying

, together with the unbiasedness condition

.

We now analyze one cycle, and then sum over cycles. Fix a cycle and let its time indices be

. Summing (3) over

, we obtain

We now focus on each term on the r.h.s. of this equality.

For the first term on the r.h.s. of (4), for any , from the regret guarantee of projected Online Gradient Descent (OGD) with learning rate

and initial point equal to

, we have

Since this inequality holds for every , we can use a hindsight choice depending on the gradients in cycle

. Moreover, taking expectation and using the definition of

, we have

Now, we choose as

Our choice of guarantees

, so the second term of the r.h.s. of (4) becomes

Now, we upper bound the last term in (4). First, observe that

and

Hence, we have

where we used Cauchy–Schwarz’s inequality and Jensen’s inequality.

Putting everything together, we have

Summing over telescopes the function values:

Dividing by gives the stated bound.

Now observe that within each cycle we have . Hence all points

lie in the convex hull of

whose diameter is at most

(triangle inequality over at most

steps). Since

, every

lies at distance at most the diameter of the set from

, i.e.,

. Therefore, if

, then for all

in cycle

we have

. By Definition 2,

Sampling uniformly over cycles and taking expectation yields the stated barycentric

-stationarity bound. Choosing

to balance the two terms (with

) gives the stated rate.

It is interesting to compute the update in Algorithm 1 when

is projected OGD:

This is reminiscent of the Stochastic Gradient Descent (SGD) update with momentum and clipping, which are common heuristics for optimizing non-convex objectives in deep learning. Yet, here the update comes naturally from the theory.

We can also prove that this bound is optimal. To see this, we consider smooth functions and the following lemma.

Proof: Since , for any

there exists

such that

.

By definition of , we have

. So, by

-smoothness, for every

, we have

. Hence,

Therefore, . Since this holds for every

, we conclude that

.

Now, recall that Theorem 3 shows that we can find a barycentric stationary point in

iterations. Thus, Lemma 4 implies that by setting

, we can find an

-stationary point of an

-smooth objective

in

iterations, which matches the optimal guarantee of standard SGD. Hence, the

rate in Theorem 3 is optimal for all

of the order of

.

2. History Bits

Zhang et al. (2020) proposed to use the Goldstein stationarity condition (Goldstein, 1977) for non-convex non-smooth objectives. They also proved a suboptimal bound of . The barycentric definition, Theorem 3, and Lemma 4 are from Cutkosky et al. (2023). Note that I changed Definition 2 a bit because Algorithm 1 can return repeated points, that would not be allowed by the original definition in Cutkosky et al. (2023). The barycentric part of the definition is not strictly necessary for the results I presented, yet it is necessary for the additional results in Cutkosky et al. (2023) on functions whose Hessian is Lipschitz. Zhang&Cutkosky (2024) improved the reduction in Algorithm 1 using an exponentially distributed

, which allows one to query the gradient at

rather than at the intermediate point

, and removes the feasible set

. Jordan et al. (2023) proved that the randomization is essential in this class of problems to achieve dimension-free rates.

Acknowledgments

Thanks to ChatGPT for checking my post.