We continue our journey into algorithms that predicts distributions and this time we talk about the Aggregating Algorithm.

1. The Aggregating Algorithm and Mixable Losses

Here, we show how to extend the Weighted Average Algorithm (WAA) we saw last time to a larger class of loss functions. We will assume to be a probability density with respect to a measure

of the set

, the domain of the losses. As before, for the set

, we define by

the set of all probability distributions with support on

In the case of -exp-concave losses, we used the fact that

for all . Now, we want to extend the class of functions where we can use a similar inequality. So, we introduce a more general class of losses, the

-mixable ones.

Definition 1. Let

. We say that

is

-mixable if there exists a mapping

called substitution function such that

It is clear that the proof of WAA we saw last time holds for and

, instead of

. Moreover, every exp-concave function is mixable because the substitution function is

. This implies that all the following regret guarantees hold for exp-concave functions too.

Proposition 2. Let

and

. If

is

-mixable, then

is

-mixable for any

.

Proof: To prove that is

-mixable, it is enough to show that for

we have

. Observe that

where in the inequality we used Jensen’s inequality on the convex function .

There is an additional caveat: The substitution function here depends on and in online learning we only know the loss function after producing our prediction. So, in our setting we need to find a generic substitution function that holds for a class of loss functions.

Hence, we consider where

is decided adversarially and we require

An example of such losses is given in the following proposition.

Proposition 3. Define the softmax

as

. The multiclass logistic loss, also referred to as softmax-cross-entropy loss,

, defined as

, is 1-mixable.

Proof: The proof is by construction: Define the mapping as defined as

for any distribution

on

, where the logarithm is entry-wise. So, for any

, we have

where the second equality uses the fact that for any ,

. Thus,

is 1-mixable.

It is worth stressing that not all the convex losses are mixable.

Proposition 4. The loss

, where

is not mixable.

Proof: To show mixability, we would need to show that there exists and a substitution function

such that

Consider the case that and

. We need to find a

and

such that

Let’s calculate the expectations:

Hence, we have

Summing these two inequalities, we have

Solving for , we have

that has the only solution

. Hence, the function is not mixable.

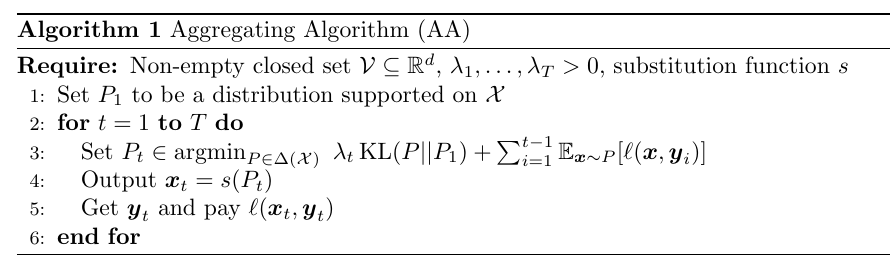

Equipped with this definition, we can introduce the Aggregating Algorithm in Algorithm 1. Its regret guarantee is the following one.

Theorem 5. Assume

to be

-mixable in the first argument for all

, and

for all

, then Algorithm 1 satisfies

Moreover, we also have

As we did for WAA last time, under additional assumptions we can also give a regret bound with respect to a deterministic competitor.

Theorem 6. Let

a non-empty closed convex set in

and

, where

is an arbitrary norm. Let

and assume that

is

-Lipschitz w.r.t.

and

-mixable. Then, the Aggregating Algorithm in Algorithm 1 with a sequence of

such that

for all

and

as the uniform distribution on

satisfies

Proof: We start from the second bound in Theorem 5. Fix ,

, and define

. Choose

in

and 0 otherwise, where

. Remember that

is the uniform distribution, so

is uniform over

. So, we have

Putting all together, we have

Now, we set such that

. In this way, we have

and

, that gives the stated bound.

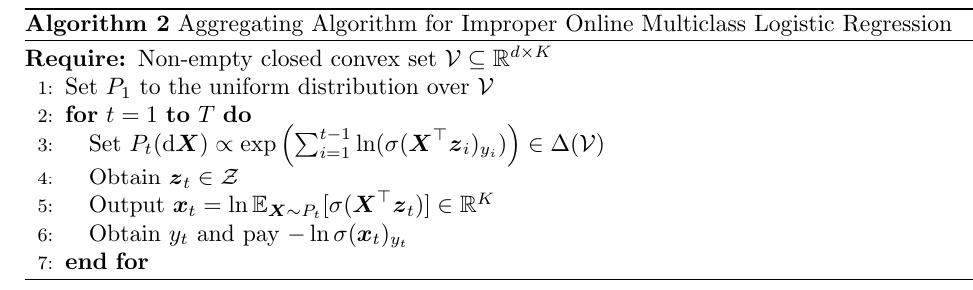

2. Example of AA: Online Multiclass Logistic Regression

In this section, we show an application of AA showing how to obtain logarithmic regret for online multiclass logistic regression. In this problem, in each round we receive a covariate and we produce a discrete probability distribution over the

classes as

. Then, we receive the true class

and we pay the loss

.

We could use a linear classifier, where

is the linear classifier at time

. Assuming that the covariates

have bounded norm and that the columns of the matrices in

has bounded norm

, one can show that this problem has exp-concave losses, so we could use the ONS or the WAA algorithm. Unfortunately, the exp-concavity is of the order of

.

Here, we show that we can prove a logarithmic bound that depends only logarithmically on . The price that we pay for this improved rate is that the algorithm we will use is improper, that is our predictor will not be linear in the covariates

, but we will still measure its regret with respect to a linear competitor.

Here, we will proceed a little bit differently than in the standard AA algorithm because we will use two loss functions, and

that satisfy a generalized form of mixability:

It is easy to see that Theorem 5 extends to this case:

Similarly, we have that if is

-Lipschitz in its first argument, we have the corresponding version of Theorem 6:

In particular, from Proposition 3, we will define ,

, and

.

Theorem 7. Assume that

for all

and

and

. Then, Algorithm 2 satisfies

Proof: We have that

Defining as the infinity norm of the L2 norm of the columns of

, we have that

. So, we have that

is

-Lipschitz w.r.t. the

. The bound on the diameter of

with respect to the same norm is

. So, using (1) with

, we obtain the stated result.

6. History Bits

The AA was introduced in Vovk (1990) (see also Vovk (1998, Appendix A) for an easier description of the algorithm).

The observation that the AA also works for infinite sets of experts was made by Freund (1996), Freund (2003).

The concept of mixability is introduced in Vovk (2001). Proposition 2 is in the proof of Vovk (1998, Lemma 9).

The mixability of the logistic loss, Proposition 3, and the content of Section 2 are from Foster et al. (2018). Theorem 6 is a generalization of a similar one for the logistic loss from Foster et al. (2018).

Acknowledgments

Thanks to Wei-Cheng Lee for feedback on a prelimary version of this post and to Gemini 2.5 and ChatGPT5 for checking all the proofs.